Introduction – A step towards a Digital Journey through the Cloud

In an increasingly digitized world, technology-enabled transformation is becoming a strategic ally for organisations that want to deliver services and products more effectively. Organisations need to orient their business around capabilities and commercially viable ‘proof points’ that can scale quickly. A key to this approach is to avoid large multi-year road maps and multi-million dollar investment projects that lock an organisation into an uncertain future. Providing a differentiated customer experience is the only way to create a sustainable advantage.

A recent Oracle Partner event (Sydney PaaS Hackathon) was a perfect stage for partners in APAC to demonstrate the power and capabilities of the Oracle Cloud Platform to accelerate a customer’s digital transformation journey. Now that the dust has settled on the amazing Sydney Hackathon, where we participated and won the second prize for our prototype using Oracle PaaS products, it is time to share what we actually built and how. In this opening blog, I will provide a glimpse of the end to end solution that we put together using nine different Oracle Cloud Services in the 28 hour marathon. Along the way, I will also share the best practices and lessons learnt from integrating the different Cloud Services, and an approach to building solutions around user centric design principles.

The core prototype and use case was a real time remote patient well being monitoring system. In particular, the solution centered on patients suffering from blood pressure related disease, which is a major cause of health related deaths in Australia.

Before describing the end to end solution, it is appropriate to describe the real problems that patients with blood pressure related disease suffer. This would provide some key drivers behind the solution that we have implemented. Let’s have a quick look at some of the frustrations sufferers have to deal with while pro-actively managing their blood pressure:

- Forgetfulness – Lifestyle changes are very important in managing high blood pressure. But lifestyle changes alone do not help. Patients have to regularly take their blood pressure readings and prescribed drugs to keep their condition from progressing. Forgetting to take timely readings or medication is a leading cause of sudden anomalies in blood pressure readings.

- Routine Health Checks – With the busy lives most people have today it can be really hard to fit in regular visits to the pharmacy for routine blood pressure checks.

- Uncertainty – People with high blood pressure are at risk of suffering from untimely stroke and heart attacks. They often visit their medical practitioners in reaction to an event or during a scheduled appointment as opposed to being looked after and being proactively advised.

Use Case – Remote Patient Monitoring and Connected Care

We built a Patient Monitoring System to manage personal health (specifically blood pressure) that remotely connected patients with their community pharmacists and medical practitioners allowing proactive management of their well being. This will be quite a read but if you manage to stay till the end, the blog will provide you with a method to identify and innovate on real world problems that have been ignored for long. Upon exploring the use case further, we identified 4 key personas beginning with Bruce Wayne (our patient).

- Bruce suffers from high blood pressure and struggles to visit his physician and pharmacist for regular blood pressure check ups. He also forgets his pills and is uncertain how this will affect his health and well being in an unforeseen event.

- Jenny is Bruce’s local community pharmacist. She collects and reviews Bruce’s blood pressure data and makes her assessment for the next course of action.

- Jenny likes to consult with a qualified medical practitioner such as a physician or a cardiologist from time to time when there is a critical reading or when she spots an anomaly and needs a second opinion.

- Lisa who is a medical practitioner believes that with the right technology solution, she can make more informed and proactive decisions for her patients without meeting them in person.

- Finally, our fourth persona is the State Health Department that has always expressed a desire to collect well being information anonymously to identify key geographical clusters associated with higher risks of heart attacks.

| Role/Persona | Background/Job Function | Important Needs |
| --- | --- | --- |
| Patient (Bruce Wayne) | Works as a Project Manager; often has to work under stressful circumstances; has a family history of heart related disorders; suffers from hypertension (high blood pressure) | Bruce would benefit from being able to take remote blood pressure readings which are automatically sent to his pharmacist, minimizing routine travel. He uses technology heavily and would not mind notifications to remind him when he misses a reading or a prescription. He is also happy to be proactively consulted and advised by his health practitioner and pharmacist when there is a problem. |
| Pharmacist (Jenny Andrews) | Works in a pharmacy as a Community Pharmacist; collects and records blood pressure readings for routine patients; prepares medications by reviewing and interpreting physician orders; controls medications by monitoring blood pressure and advising interventions | As a pharmacist, Jenny would like to automatically detect when any of her community patients has a critical blood pressure reading, and manage the related information, so that she can take the next proactive action. |
| Medical Practitioner (Lisa Brown) | Diagnosis, monitoring and treatment of high blood pressure; physical examination of routine patients; informs patients about their blood pressure and how they can make lifestyle changes to prevent or improve hypertension | As a medical practitioner, Lisa wants to be able to analyse her patients’ well being records over a period of time so that she can detect patterns, anomalies and exceptions. |
| State Health Department | Targeted prevention activities for disadvantaged populations | The state department of health is interested in the analytics side of things. It would be great if they could correlate high blood pressure to a geographical region, weather condition or time of the year. |

The Remote Health Monitoring prototype discussed in the rest of this blog aims to address the core challenges discussed earlier. We put our thoughts into action by integrating close to 10 Cloud Services in 28 hours for our use case. Oracle IoTCS, ICS, MCS, Application Container Cloud Service (ACCS), APICS, Business Intelligence Cloud Service (BICS), and PCS were the core Cloud services at the heart of our solution.

Solution at a Glance : myHealth

For the Hackathon, we created a fully functional and cloud integrated application called myHealth. The key objective of myHealth was to shift patient healthcare from cure to prevention. The overarching solution emphasized building an infrastructure to continuously oversee the vital parameters of a patient and provide necessary alerts and interventions in near real time by leveraging IoT, services and a digital platform in a cost effective way.

Oracle Cloud Services in Action

As we wanted to remotely measure and stream health data, process it, and analyse it in real time to detect and react to an anomaly, Oracle Internet of Things Cloud Service was the ideal choice to be at the heart of the solution. Of the numerous blood pressure monitors available today, we used iHealth Sense Wrist wireless devices. Once a patient takes a reading from this device, it instantly pushes that data onto the device cloud. We accessed the iHealth APIs to create a web-hook that publishes this stream of data into the IoT Cloud using Application Container Cloud Service and Integration Cloud Service. This is where interesting things happen:

- Using IoT Cloud Service, we built explorations, which are visual representations of real time data coming from remotely registered devices/gateways for insightful data interrogation. The explorations were built to refine and sanitize the incoming real time data stream using a few out of the box patterns, such as eliminating duplicates and detecting fluctuations, and to define criteria that separate critical events from normal ones.

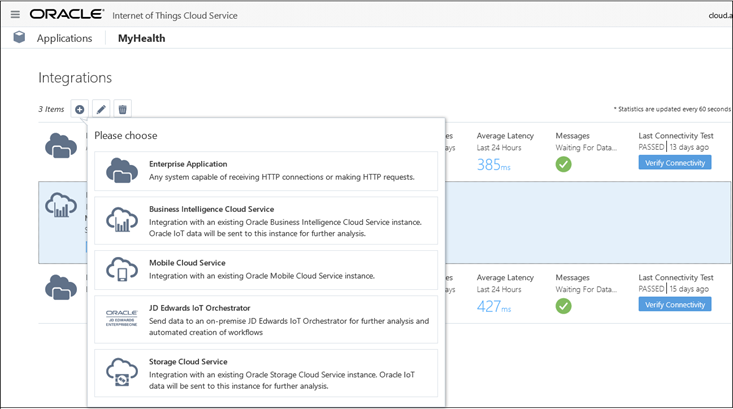

- Once an exploration is published, it becomes available as one of the message formats to choose from when configuring an IoT Application’s integration with external services, such as Oracle Business Intelligence (BI) Cloud Service, Oracle Mobile Cloud Service or an enterprise application based on HTTP endpoints.

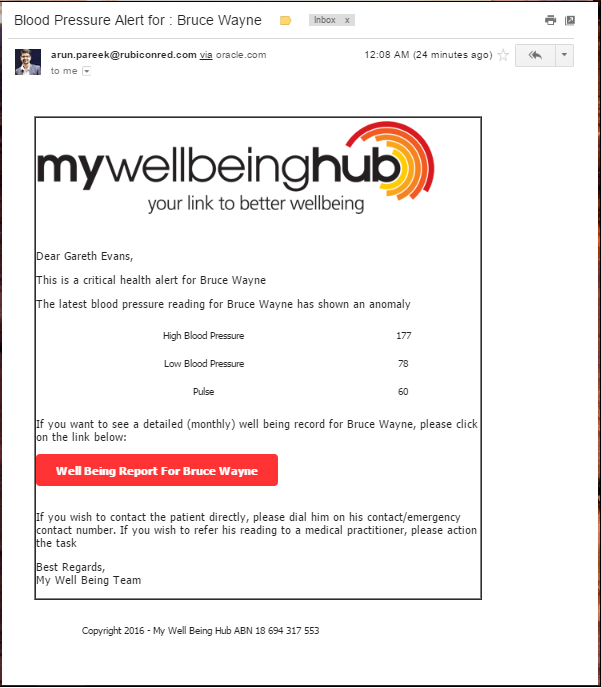

- After isolating critical events from the data stream that required manual intervention, a process flow is triggered in Oracle PCS where a pharmacist or a medical practitioner can assess the anomaly and take further action such as provide phone consultation, schedule an appointment, renew/change a prescription, issue referrals, order meds, etc.

- We also created a myHealth mobile app in Oracle MCS that would allow for push notifications to a.) patients when they have forgotten to take their readings or leave a perimeter (area of residence), and b.) pharmacists and doctors when a process is triggered due to a critical reading.

- Finally we created a range of predictive and analytical reporting dashboards leveraging Oracle Database Cloud Service (APEX), Business Intelligence Cloud Service and Data Visualization Cloud service.

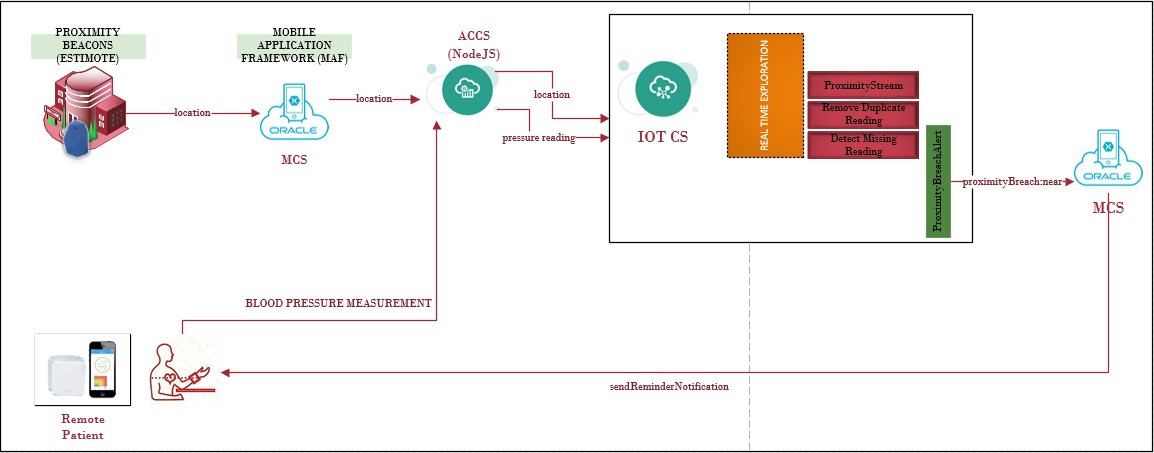

Here is an overall connected architecture for the use case. The entry point and heart of the solution is the Internet of Things Cloud Service (IoTCS). Patients can self measure their blood pressure readings remotely through tele-monitoring devices that collect and send data to either an IoT server directly or through a gateway.

This blog aims to explain specifically how we used Oracle IoTCS, which fascinated us, is where we built quite a lot of our components and was at the heart of our use case. Hopefully the use case will speak for itself.

Oracle Internet of Things Cloud Service (IOTCS)

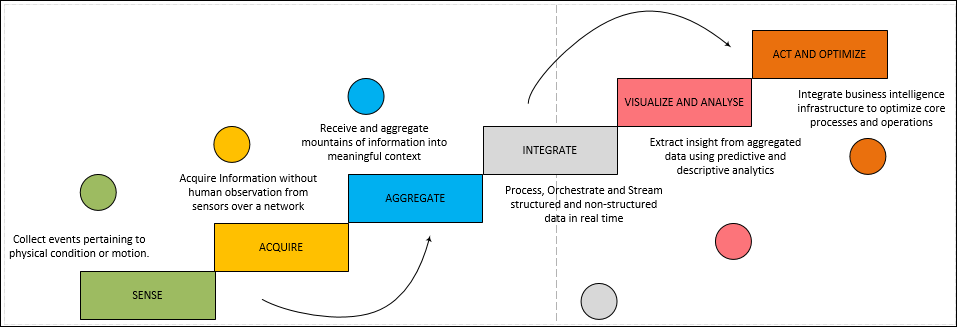

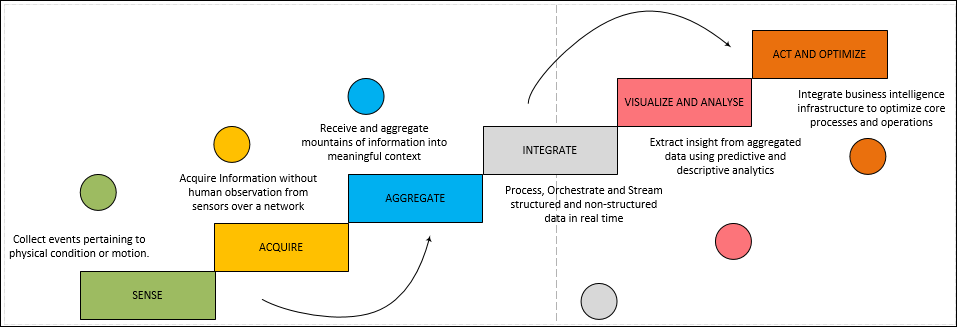

The IoT Information Value Loop

The Information Value Loop model shown below can serve as the cornerstone for an approach to IoT solution development for potential use cases. This model provides discrete but connected stages. An action in the real world allows creation of information about that action, which is then communicated and aggregated across time and space, allowing analysis of the data in the service of modifying future acts.

A Quick Primer to Oracle IoT Cloud Service

To map the use cases for myHealth onto the information value loop model depicted above, we were able to utilize Oracle IoT Cloud Service to:

- Collect proximity information about a patient’s movement i.e. to check if the remote patient is leaving his house.

- Collect blood pressure and pulse measurement about a patient.

- Register directly connected devices (Beacons and Blood Pressure Monitors) or Gateways so that information can be collected from these devices remotely in real time.

- Perform real time pattern matching on data streams to sanitize information by eliminating duplicates, detecting missing events, etc.

- Perform data aggregation, filtering and correlation on multiple streams to generate business events.

- Raise message alerts based on business events.

- Define external integration touch points to invoke systems, services and process to act on critical events.

- Remotely monitor devices and assets.

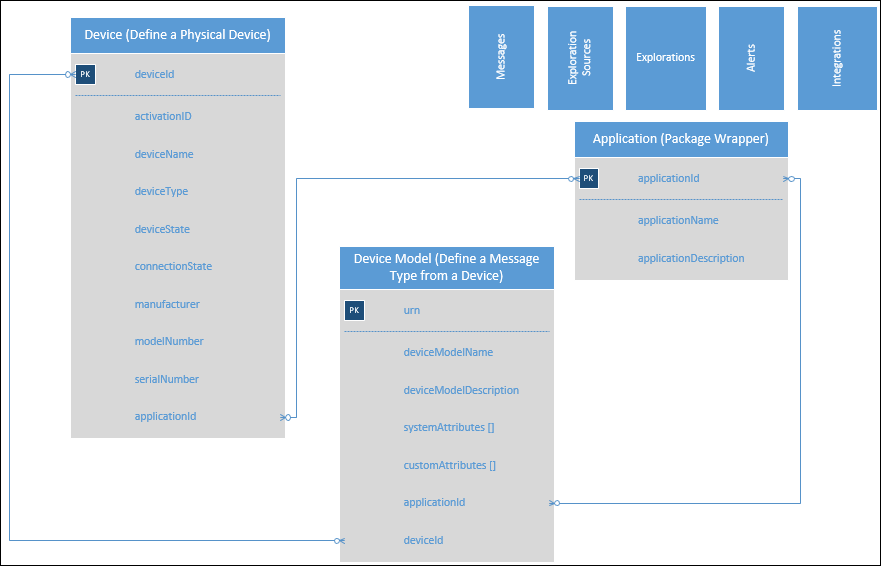

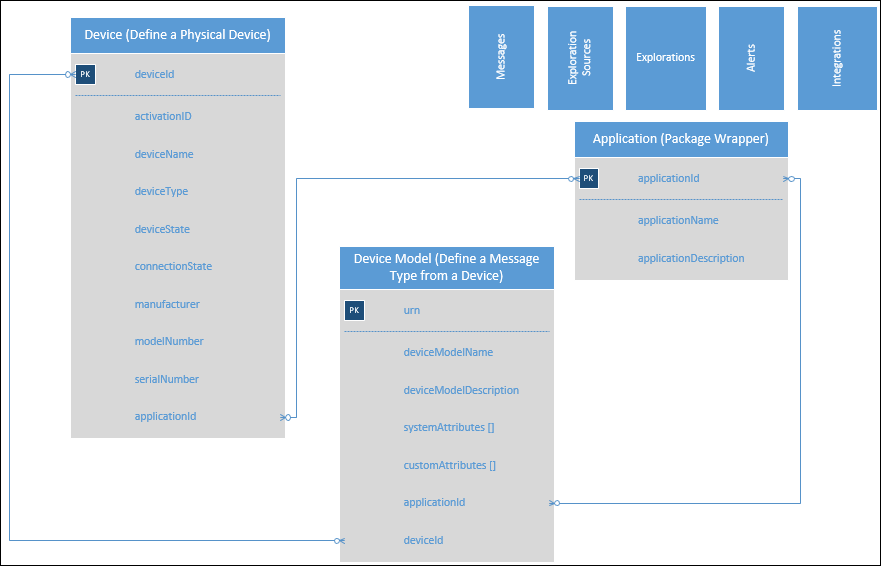

Among the various components of Oracle IoTCS, here is a quick diagram explaining the core components and the relationship between them.

In addition to the above server side artifacts, Oracle IoTCS provides a range of client APIs for platforms such as C, Java, JavaScript, and Android for devices to activate, register and send messages to an IoT server. Devices can either connect directly to the IoTCS server or they can connect to a Gateway which in turn communicates with the IoT server. Once connected, a device can begin to send messages to the IoT server based on a pre-defined Device Model definition (analogous to a message contract).

Oracle IoT CS Device Models – Registration and Activation

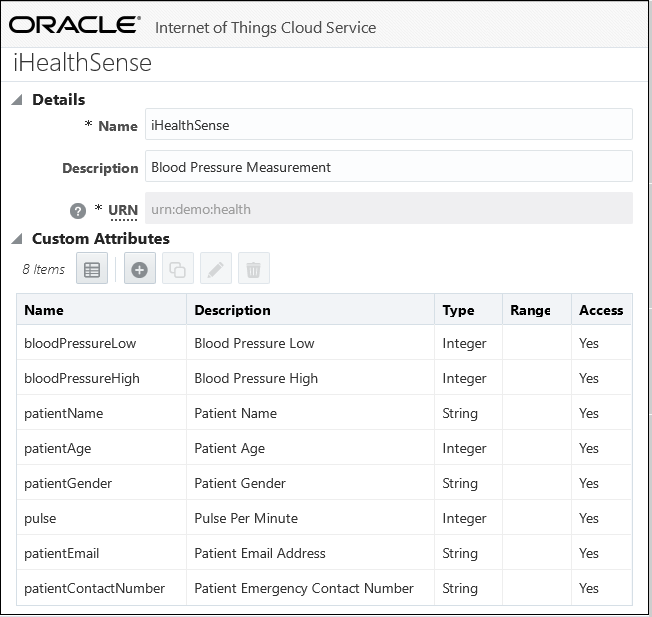

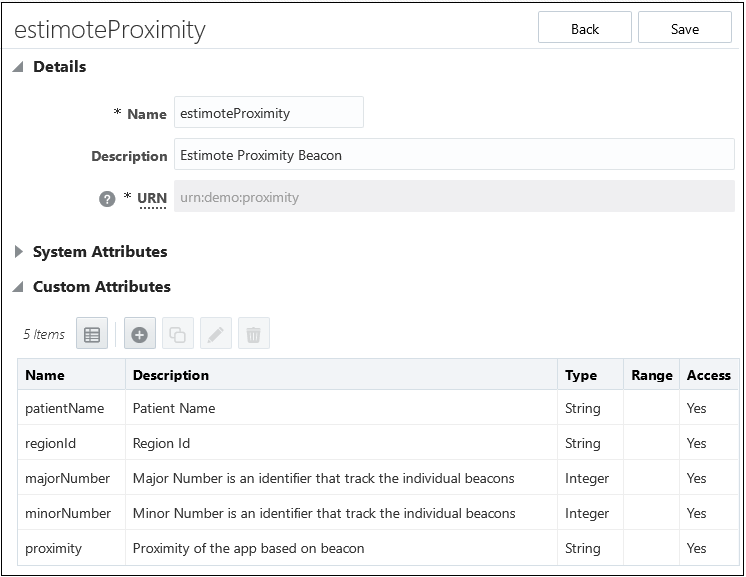

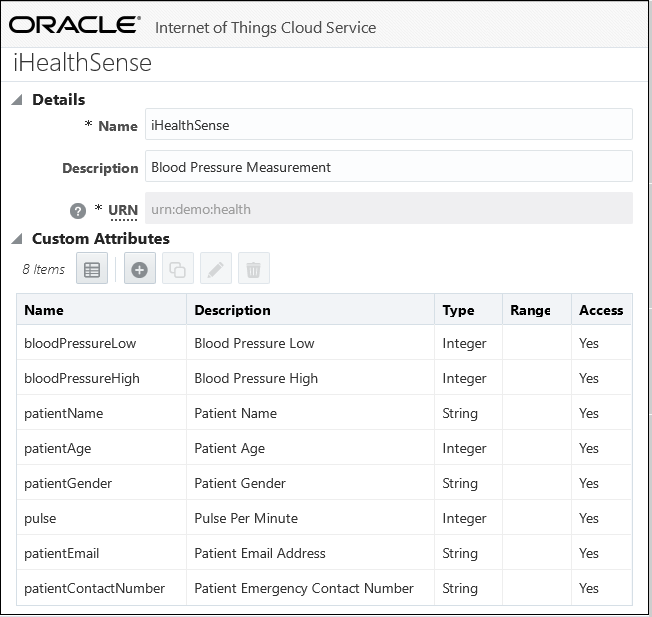

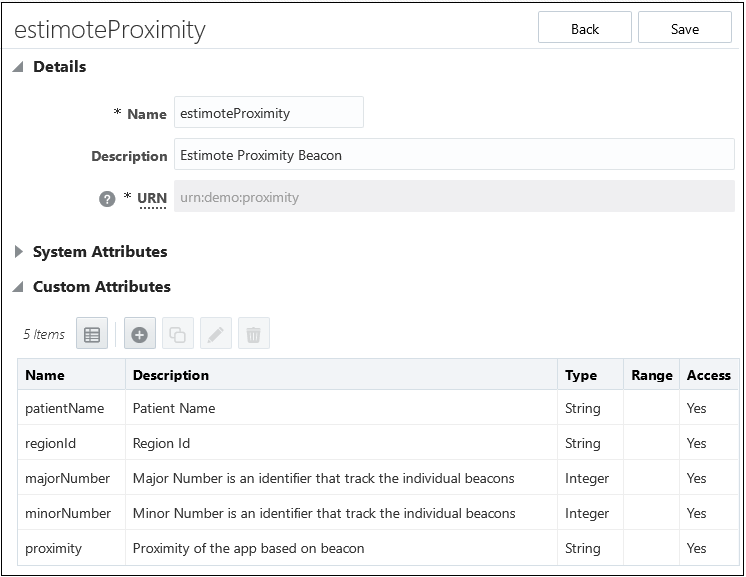

For our myHealth IoT application we created two device models to collect patient health readings and proximity readings respectively.

- iHealthSense – This device model (urn:demo:health) was created to define a message format for the blood pressure reading received in the IoT server through any devices that capture this information. In addition to the predefined System Attributes, a device model allows creating Custom Attributes through the console. This allows specifying a message format that can be published from any device that uses this device model.

- estimoteProximity – This device model (urn:demo:proximity) defines a message format that any registered proximity devices such as Beacons use to send messages to the IoT server. Beacons can relay geographical coordinates as well as proximity of a device/person/instrument from them. In addition, certain Beacons can also send temperature, humidity as well as images.
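Since a device model behaves like a message contract, it can help to picture it as a plain object that incoming messages are checked against. The following is only an illustrative sketch in plain JavaScript, not the IoT CS API: the attribute names are taken from the sample message used later in this post, while the attribute types and the `validateReading` helper are assumptions made for the example.

```javascript
// Hypothetical, simplified view of the urn:demo:health device model.
// Attribute names come from the sample message in this post; the types are assumptions.
const healthModel = {
  urn: 'urn:demo:health',
  attributes: {
    bloodPressureHigh: 'number',
    bloodPressureLow: 'number',
    pulse: 'number',
    patientName: 'string',
    ora_latitude: 'number',
    ora_longitude: 'number'
  }
};

// Return a list of problems found when checking a message against the model.
function validateReading(model, message) {
  const problems = [];
  for (const [name, type] of Object.entries(model.attributes)) {
    if (!(name in message)) {
      problems.push('missing attribute: ' + name);
    } else if (typeof message[name] !== type) {
      problems.push('wrong type for ' + name + ': expected ' + type);
    }
  }
  return problems;
}

const sample = {
  bloodPressureHigh: 150, bloodPressureLow: 95, pulse: 60,
  patientName: 'Bruce Wayne', ora_latitude: -37.817, ora_longitude: 144.946
};
console.log(validateReading(healthModel, sample));             // no problems expected
console.log(validateReading(healthModel, { pulse: 'sixty' })); // lists missing and mistyped attributes
```

In the real service the contract is enforced by the IoT server itself; a local check like this is only useful for catching malformed payloads before they leave the client.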

The next step for us was to create, register and activate Devices that can use these device models and communicate with the IoT server. Creating a Device in the IoT server is a two part process.

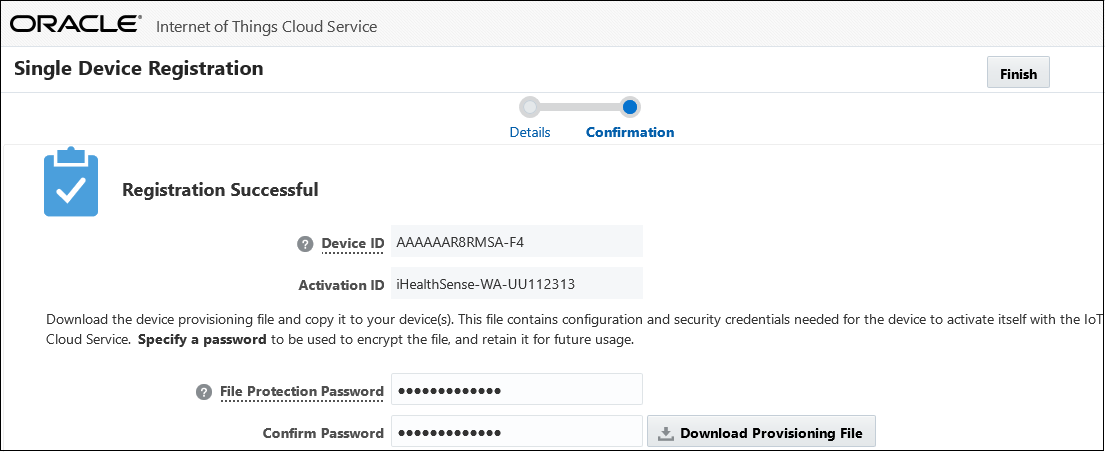

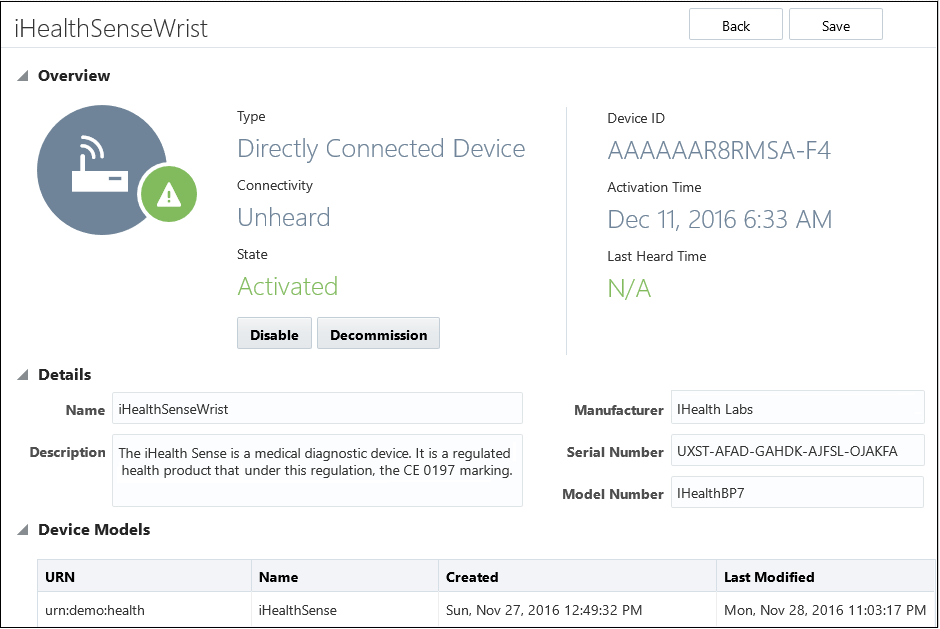

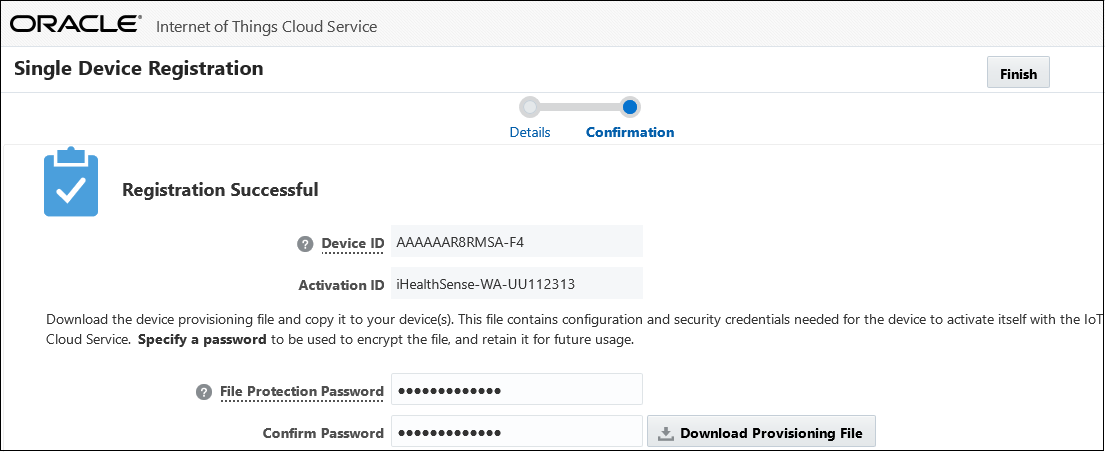

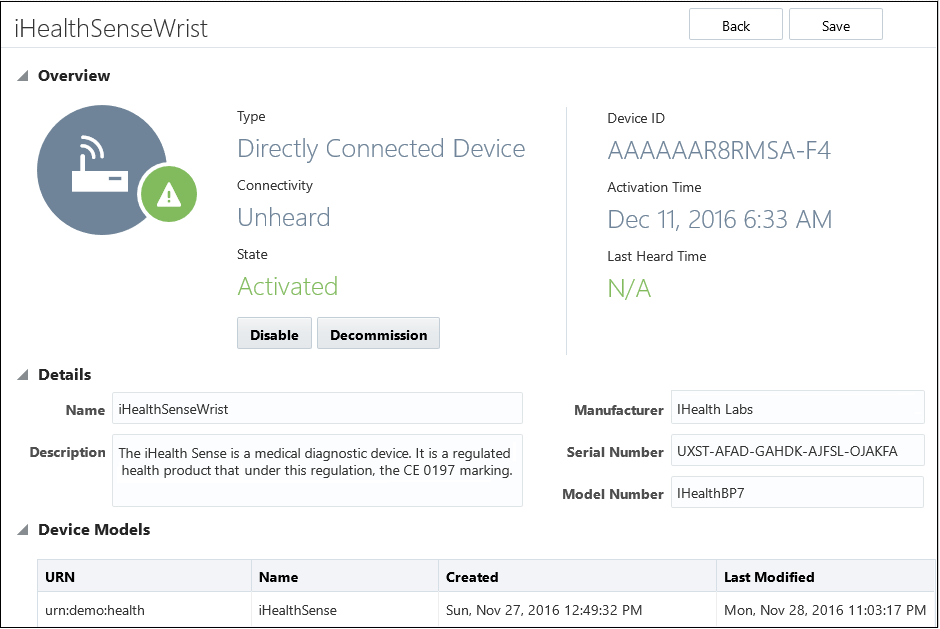

- Registering a Device – Registration of a device is done in the IoT CS console. The following image shows how a single device is being registered by entering appropriate meta data level information about the device.

Once the device registration details are saved successfully, the Oracle IoT CS server creates a unique Device ID, which the physical device’s APIs can then use to send messages to and receive messages from the IoTCS server. A provisioning file is also created on the web console; it stores the encrypted connection credentials required to connect to this device and activate it on the IoT server through the IoT APIs. Any client side API then requires this provisioning file to interact with the activated device. The provisioning file is a configuration file that contains a hashed password, the URL of the IoT CS server and the device activation id registered on the IoT server. When this provisioning file is downloaded, a file protection password needs to be specified; it is later used to read the connection details from the protected file.

Application Container Cloud Service

When we began looking into the best ways to push data into IoT, we found the Node.js IoTCS APIs to be about 100 times faster than the REST APIs. We therefore implemented the client code that communicates with the Oracle IoT CS server in Node.js. Both Oracle MCS and Oracle Application Container Cloud Service provide containers to run and execute Node code.

Setting up Node.js and Node Package Manager

Node.js can be downloaded from here and the IoT CS client libraries can be downloaded from here. Depending upon your preferences, you can either use a standard text editor to write the Node.js code or use an IDE that supports it. Next install the client libraries as global libraries through Node Package Manager (npm) using the following command.

```shell
npm install forever -g
```

- Activating a Device – When a remote device sends a message to the registered device in the IoT CS, it is automatically activated. The following is a code snippet in Node.js using the Oracle IoTCS Node.js libraries that connects to a remote server as a standalone client using the provisioning file and activates a device. Once a device is activated, it can send and receive messages.

```javascript
var dcl = require("device-library.node");
dcl = dcl({debug: true});

// Load the provisioning file for the device, downloaded from the IoT server
dcl.oracle.iot.tam.store = "iHealthSense-WA-UU112313-provisioning-file.conf";
// Password specified when downloading the provisioning file
dcl.oracle.iot.tam.storePassword = "#Musicrules12";

// Create a directly connected device and activate it if not already activated
var dcd = new dcl.device.DirectlyConnectedDevice();

if (dcd.isActivated()) {
    dcd.close();
} else {
    // Activate the device against the blood pressure device model
    dcd.activate(['urn:demo:health'], function (device) {
        dcd = device;
        console.log(dcd.isActivated());
        if (dcd.isActivated()) {
            dcd.close();
        }
    });
}
```

Save the above code snippet as DeviceActivation.js, go to a command prompt (assuming you are using Windows) and type

```shell
node DeviceActivation.js
```

If we check the state of the device in IoT CS server, it would be Activated. The device activation also associates the selected device model with the device. This means that the device can begin accepting messages in the format described in the device model.

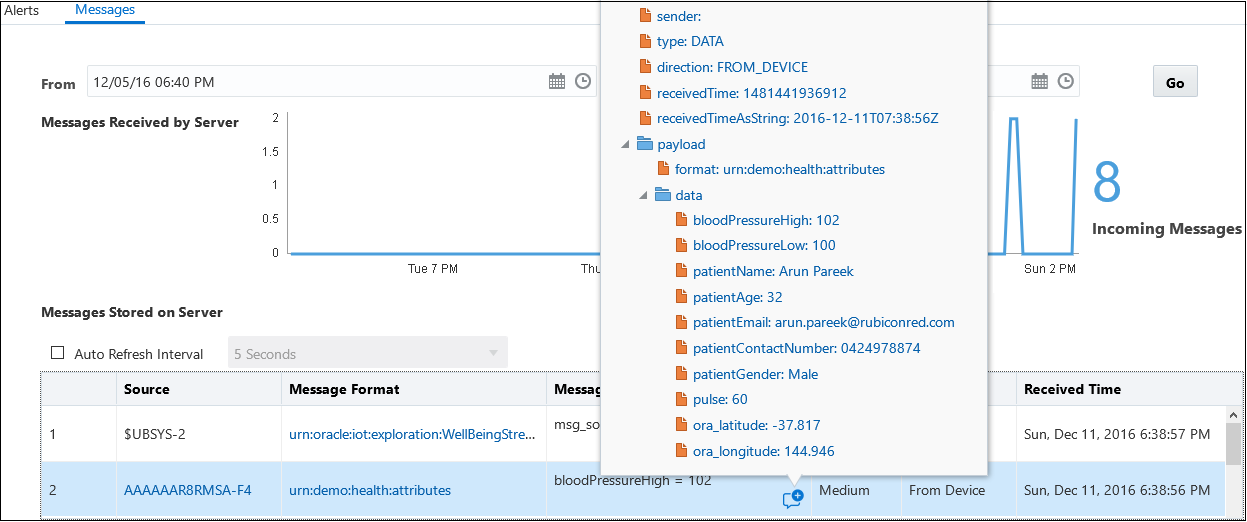

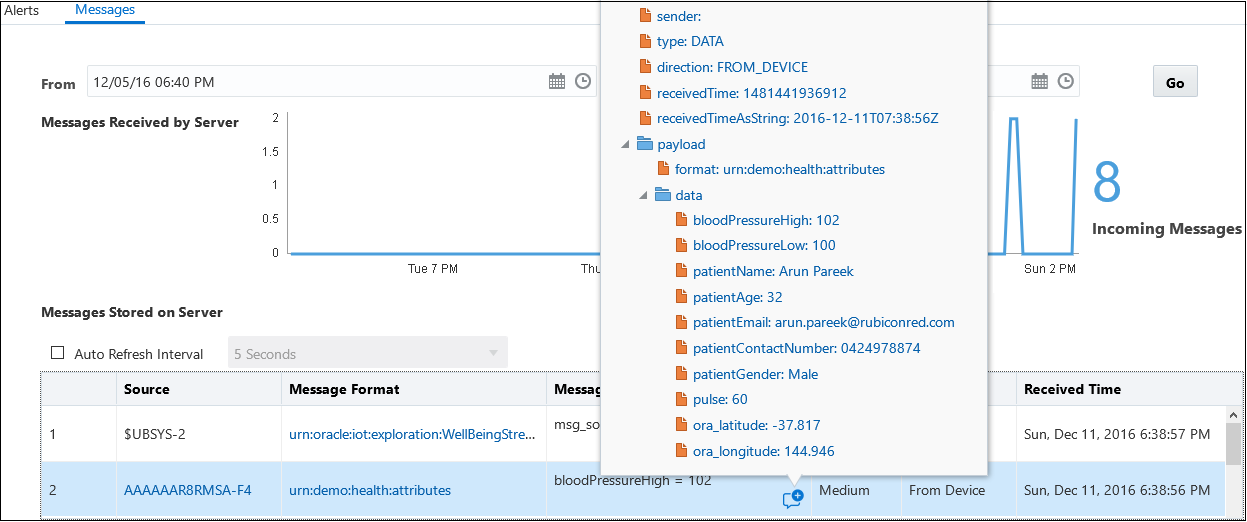

- Sending Messages to a Device – After a device has been activated, the following Node.js code fragment, acting as a remote directly connected device, can be used to publish a message to the IoT CS server.

```javascript
var dcl = require("device-library.node");
dcl = dcl({debug: true});

// Provisioning file downloaded from the IoT server, and the password chosen at download time
dcl.oracle.iot.tam.store = "iHealthSense-WA-UU112313-provisioning-file.conf";
dcl.oracle.iot.tam.storePassword = "#Musicrules12";

var myModel;

// Create a directly connected device and activate it if not already activated
var dcd = new dcl.device.DirectlyConnectedDevice();

if (dcd.isActivated()) {
    getHWModel(dcd);
} else {
    dcd.activate(['urn:demo:health'], function (device) {
        dcd = device;
        console.log(dcd.isActivated());
        if (dcd.isActivated()) {
            getHWModel(dcd);
        }
    });
}

// Fetch and display the device model, then start sending data
function getHWModel(device) {
    device.getDeviceModel('urn:demo:health', function (response) {
        console.log('-----------------MY DEVICE MODEL----------------------------');
        console.log(JSON.stringify(response, null, 4));
        console.log('------------------------------------------------------------');
        myModel = response;
        startVirtualHWDevice(device, device.getEndpointId());
    });
}

// Send a message to the device based on the device model attributes
function startVirtualHWDevice(device, id) {
    var virtualDev = device.createVirtualDevice(id, myModel);

    // Simulated readings: systolic 100-179, diastolic 60-119
    var bpHigh = Math.floor(Math.random() * (180 - 100)) + 100;
    var bpLow = Math.floor(Math.random() * (120 - 60)) + 60;
    console.log('High BP: ' + bpHigh);

    var newValues = {
        bloodPressureHigh: bpHigh,
        bloodPressureLow: bpLow,
        patientName: "Arun Pareek",
        patientAge: 32,
        patientEmail: "arun.pareek@rubiconred.com",
        patientContactNumber: "0424978874",
        patientGender: "Male",
        pulse: 60,
        ora_latitude: -37.817000,
        ora_longitude: 144.946000
        //timeStamp: new Date().toDateString()
    };

    virtualDev.update(newValues);
    virtualDev.close();
}
```

Once a message is received for a device, it can be audited in the IoT CS service console. Pretty simple and powerful. The Node.js code can also be tweaked to send a bulk message to the device.
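One possible reading of "bulk" here is simply generating several readings and sending them in a loop. The sketch below covers only the generation part in plain JavaScript, reusing the value ranges from the snippet above; `simulateReading` and `simulateBatch` are hypothetical helper names, and whether the client library offers a dedicated bulk call is not something this sketch assumes.

```javascript
// Build one simulated reading; `random` is injectable so the output can be made repeatable.
function simulateReading(patientName, random = Math.random) {
  return {
    bloodPressureHigh: Math.floor(random() * (180 - 100)) + 100, // 100..179
    bloodPressureLow: Math.floor(random() * (120 - 60)) + 60,    // 60..119
    pulse: 60,
    patientName: patientName
  };
}

// Build a batch of readings that a sender could loop over.
function simulateBatch(patientName, count, random = Math.random) {
  const batch = [];
  for (let i = 0; i < count; i++) {
    batch.push(simulateReading(patientName, random));
  }
  return batch;
}

const batch = simulateBatch('Bruce Wayne', 5);
console.log(batch.length + ' readings generated');
```

Each entry of `batch` could then be passed to `virtualDev.update(entry)` in the same way the single `newValues` object is passed above.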

Aggregation, Analytics and Actions using Oracle IoT CS

Data collected from multiple sensing devices comes in different formats and at different sampling rates (the frequency at which data is collected). Oracle IoT CS allows creation of data exploration sources that can collect structured raw data and present it as a continuous stream.

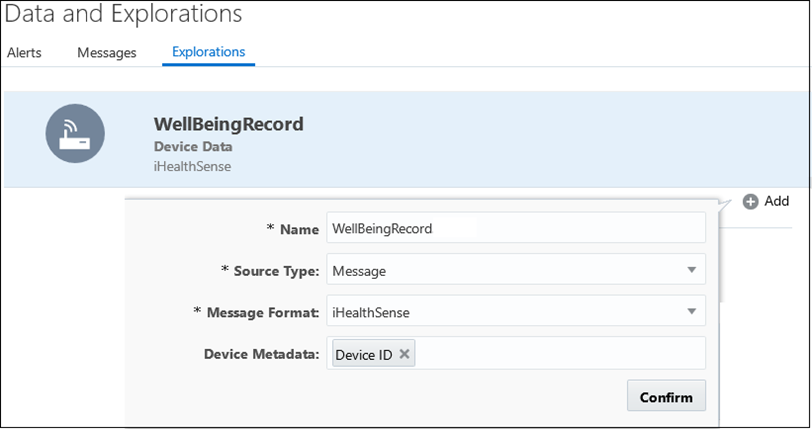

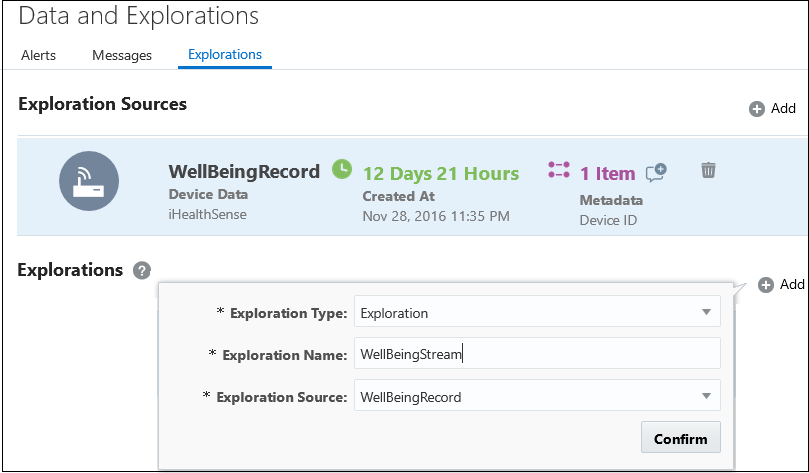

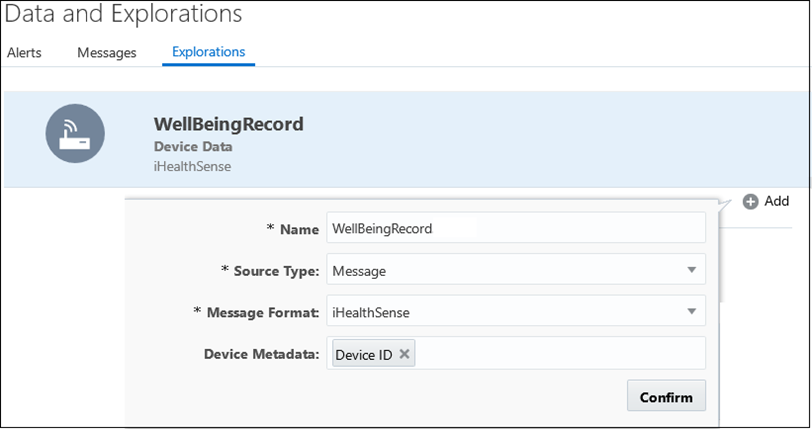

Exploration Sources

An exploration source can be created in an IoTCS application based on a device model. This exploration source will read and store the raw data that is received in the IoT server from the remotely connected device. The exploration source message format is based on a pre-defined device model defined in the IoT application.

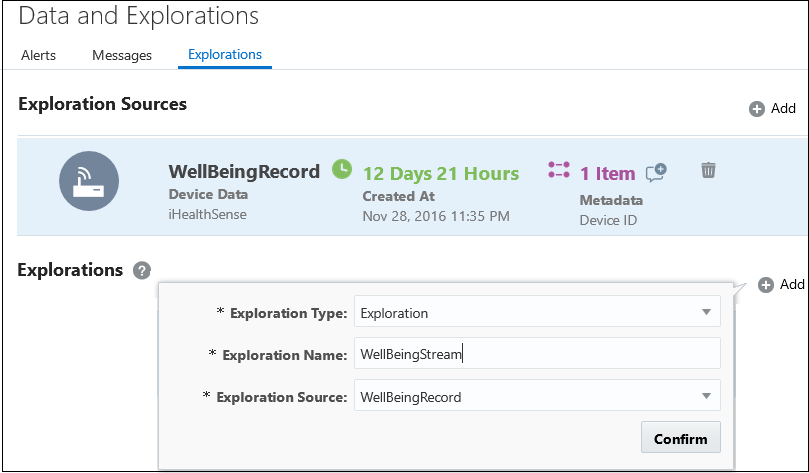

Explorations

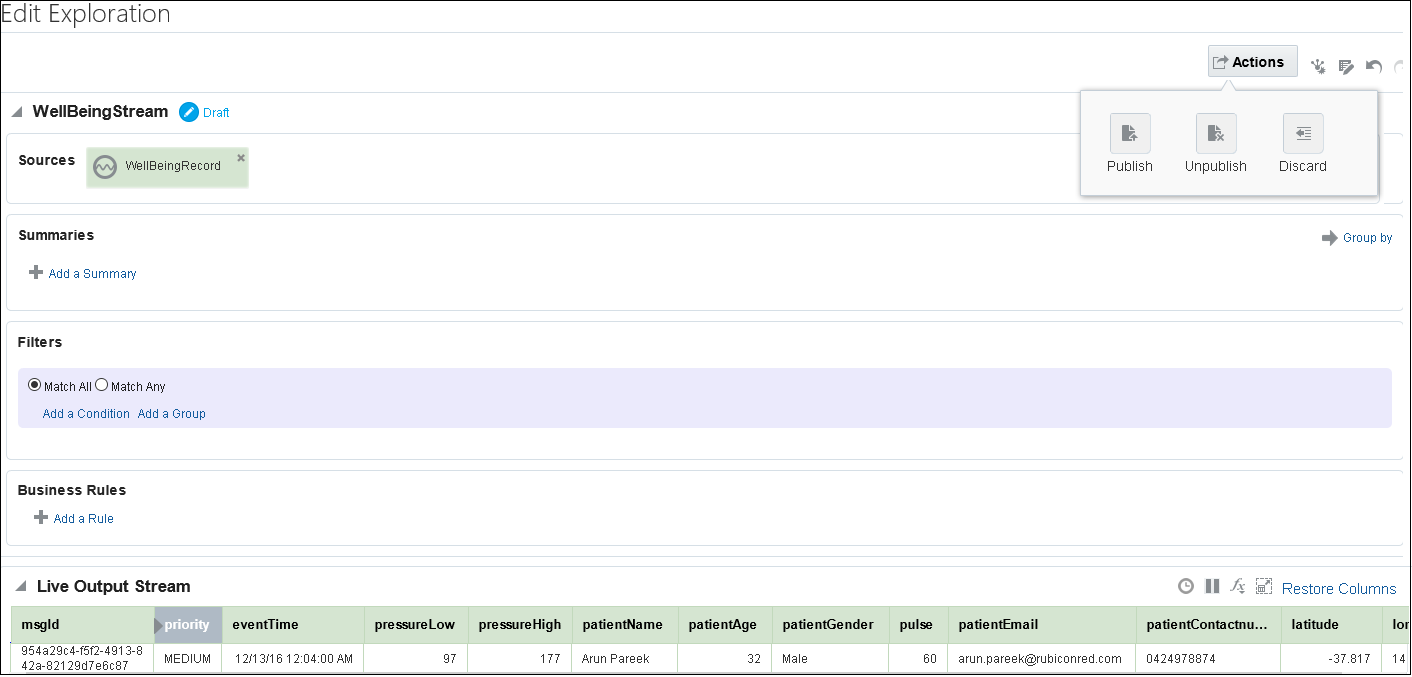

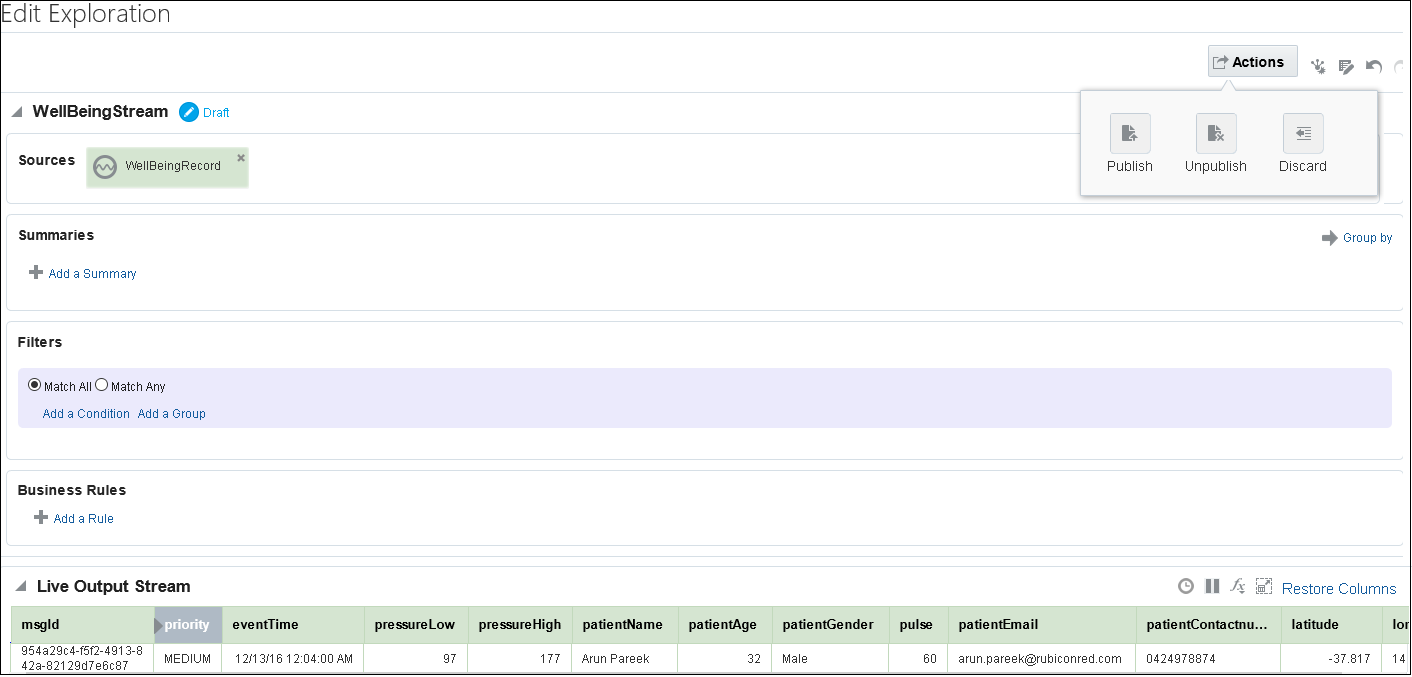

A message exploration can be used to analyze and pattern match data from different exploration messages to provide a real time stream of messages. Explorations in Oracle IoT Cloud Service are very powerful as they support message aggregation and analytics through a number of pre-defined patterns (a pattern is a template that already has the business logic built into it) such as duplicate elimination, missing event detection, fluctuation detection, etc. An exploration is created based on an exploration source.

Once an exploration is created, it is in an unpublished state. Explorations are visual representations of real time disparate event data flows from IoT devices, for insightful data interrogation. Explorations provide a number of features such as correlating, filtering and grouping for managing data streams in real time. These data functions can be aggregated over a time or event based window and used to create a summary view of data. Business rules can also be defined in explorations to review incoming events and apply business logic to generate resulting data fields that can be displayed in tabular or graph forms. Once an exploration is edited and finalized, it has to be published for the changes to take effect. For our use case, we created a standard exploration that would stream the blood pressure and pulse readings of patients in real time.

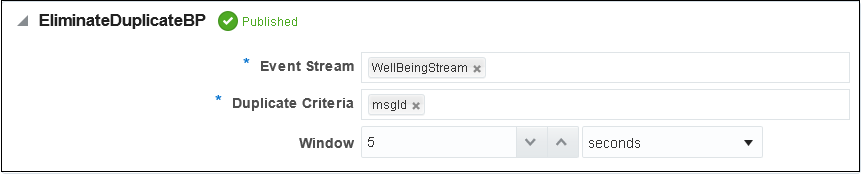

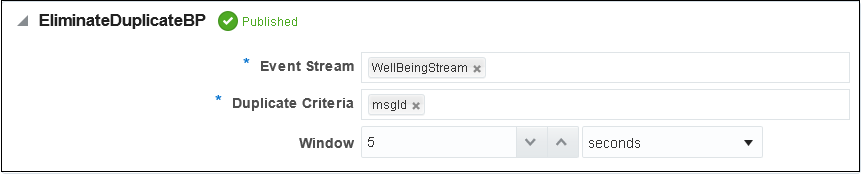

Duplication Elimination and Anomaly Detection

Explorations in an Oracle IoT Cloud Service application can be nested, which means, we can create another exploration based on an existing one. As a best practice, it is always recommended to not add any business logic in the base exploration but to create a derived exploration from it. The pipeline shown below provides the necessary abstraction for the core explorations used for analytics from the source explorations.

The exploration below is built on the previous exploration and is based on the duplicate elimination pattern. This exploration will only stream data events that have a unique msgId. The window specifies the time period or interval during which the pattern stays active for each incoming message.

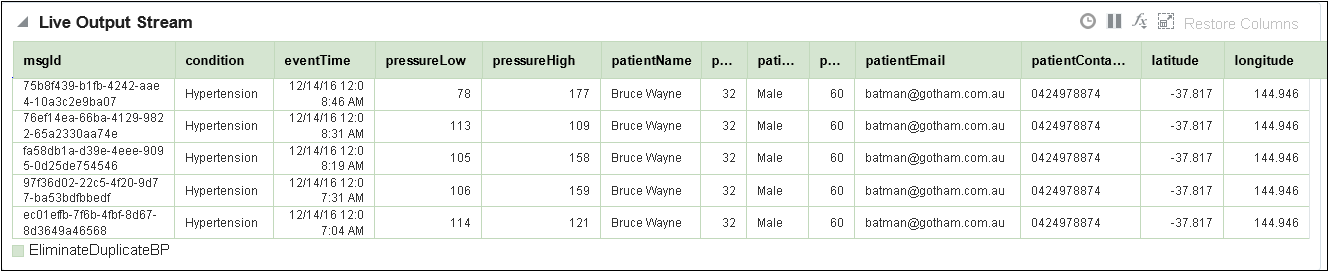
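To make the duplicate elimination pattern more concrete, here is a rough sketch of the idea in plain JavaScript. It is not how IoT CS implements the pattern; the `timestamp` field, the 60-second window and the `createDeduplicator` helper are assumptions chosen for illustration, while `msgId` is the key mentioned above.

```javascript
// Keep an event only if its msgId has not been seen within the last `windowMs` milliseconds.
function createDeduplicator(windowMs) {
  const lastSeen = new Map(); // msgId -> timestamp of the last accepted event
  return function accept(event) {
    // Forget ids whose window has expired so the map does not grow without bound.
    for (const [id, ts] of lastSeen) {
      if (event.timestamp - ts >= windowMs) lastSeen.delete(id);
    }
    if (lastSeen.has(event.msgId)) return false; // duplicate inside the window
    lastSeen.set(event.msgId, event.timestamp);
    return true;
  };
}

const accept = createDeduplicator(60000); // assumed 60-second window
const events = [
  { msgId: 'a', timestamp: 0 },
  { msgId: 'a', timestamp: 1000 },  // duplicate, dropped
  { msgId: 'b', timestamp: 2000 },
  { msgId: 'a', timestamp: 61000 }  // window for 'a' has expired, kept
];
const unique = events.filter(accept);
console.log(unique.map(e => e.msgId + '@' + e.timestamp));
```

The sketch assumes events arrive in timestamp order; a stream with out-of-order events would need a more careful expiry rule.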

Adding Business Logic to Explorations

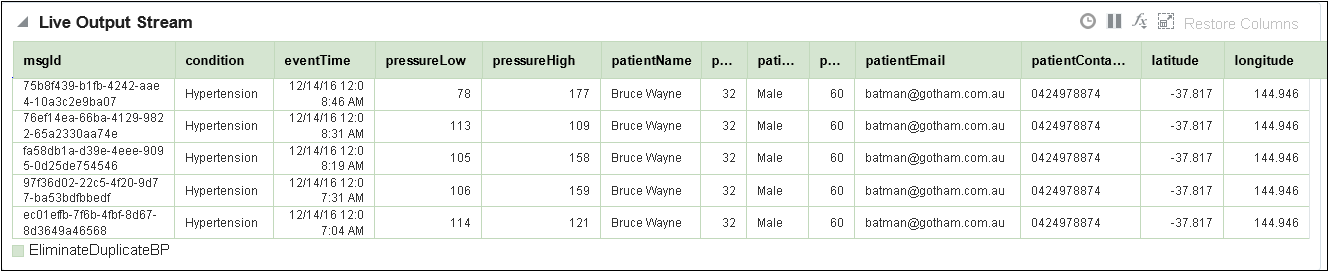

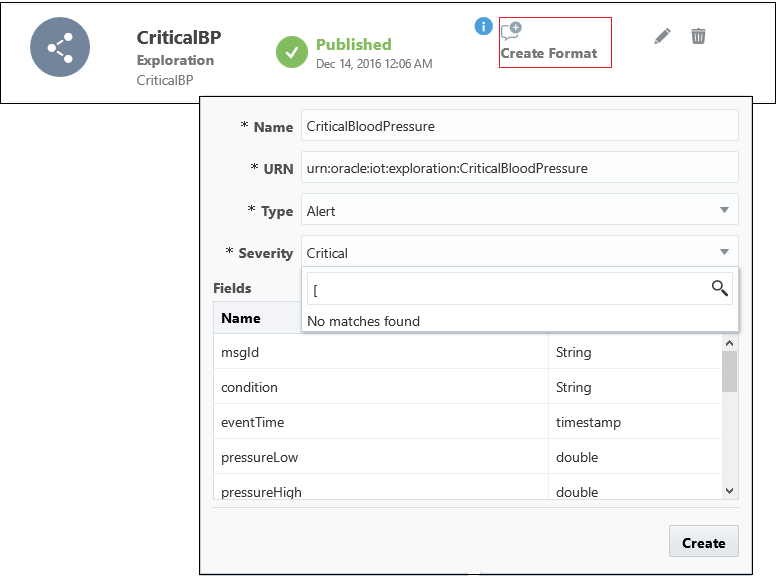

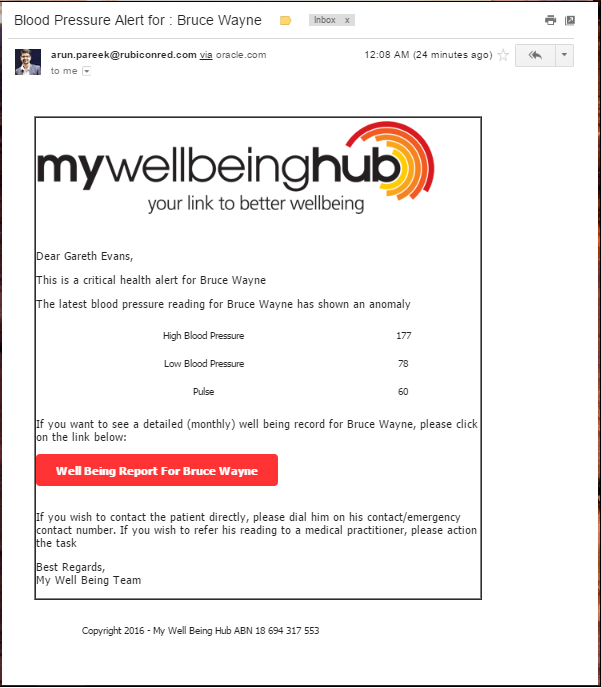

Finally, the last nested exploration is the CriticalBP stream, which is built on EliminateDuplicateBP as its exploration source. Filters can be applied to an exploration so that the stream only generates data events of particular importance. For instance, blood pressure readings where the systolic pressure is greater than 140 mmHg or less than 90 mmHg are considered critical and could indicate hypertension or hypotension. These blood pressure readings should raise an alert that can be viewed and acted upon by a healthcare professional.

The output exploration, in its published state, shows real time critical blood pressure events for different patients along with their geographical coordinates (source of the reading) and contact information. Data from remote devices is pushed to this live output stream, so the view refreshes whenever a new event arrives rather than needing a manual refresh.
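The filter behind the CriticalBP stream boils down to a simple predicate. A minimal sketch in plain JavaScript, using the systolic thresholds quoted above and the attribute names from the sample message; the `isCritical` helper and the sample patients are made up for illustration.

```javascript
// Thresholds taken from the text: systolic above 140 or below 90 is treated as critical.
const SYSTOLIC_HIGH = 140;
const SYSTOLIC_LOW = 90;

function isCritical(reading) {
  return reading.bloodPressureHigh > SYSTOLIC_HIGH || reading.bloodPressureHigh < SYSTOLIC_LOW;
}

const readings = [
  { patientName: 'Patient A', bloodPressureHigh: 120, bloodPressureLow: 80 },
  { patientName: 'Patient B', bloodPressureHigh: 165, bloodPressureLow: 100 },
  { patientName: 'Patient C', bloodPressureHigh: 85, bloodPressureLow: 55 }
];
const criticalBP = readings.filter(isCritical);
console.log(criticalBP.map(r => r.patientName)); // Patient B and Patient C
```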

Getting a Proximity Alert

As I indicated before, there are a number of additional use cases that can be implemented alongside the core use case of remote patient monitoring. When we were at the Hackathon, we interviewed a few people who had problems with blood pressure to learn their key pain points. The biggest one across many people was forgetfulness, i.e. forgetting to take readings on time, take prescribed drugs or renew a prescription. We needed a solution to remind a patient to take their readings on time. But sometimes a reminder alone is not sufficient, as patients need to have their reading device on them. One way to solve this is by using beacons to track the proximity of a patient to his home.

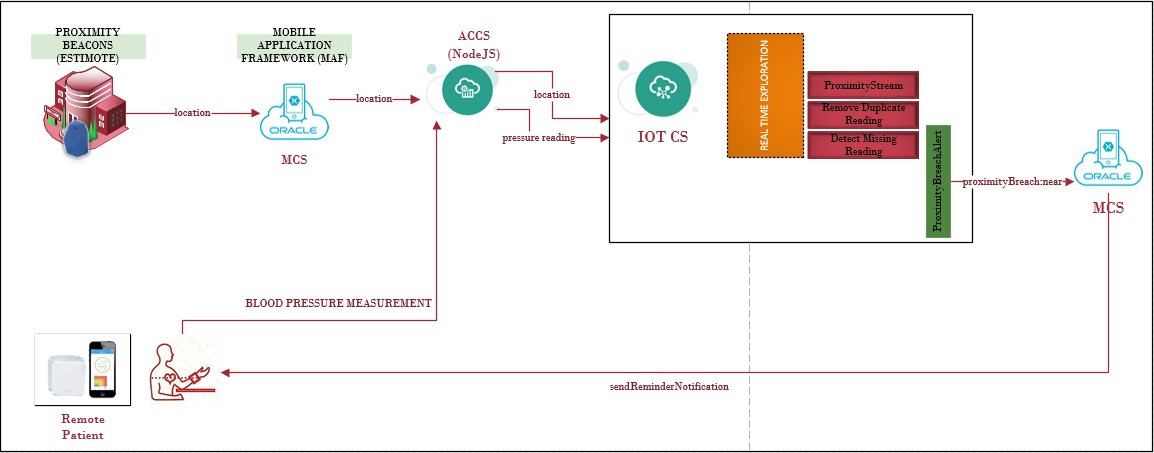

Visualize the high level message flow diagram below. If a beacon is attached to a patient’s house door or exit, it keeps sending proximity information to the Oracle IoT CS server. When the proximity is breached (i.e. when a patient has left his house), an IoT exploration stream can then check if a reading has been taken for the day. In case of a missed reading, it can generate an alert leading to a notification on the patient’s phone. Of course, this integration would not be possible without using Oracle Mobile Cloud Service, but developing this feature is a breeze.
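The decision at the centre of this flow (the patient has left home, so has a reading been taken today?) can be sketched in a few lines of plain JavaScript. The event shape (`leftHome`, `timestamp`), the helper names and the dates are hypothetical, and days are compared in UTC purely to keep the example short.

```javascript
// Return true when two timestamps fall on the same calendar day (UTC, for simplicity).
function sameDay(a, b) {
  return new Date(a).toISOString().slice(0, 10) === new Date(b).toISOString().slice(0, 10);
}

// Decide whether a "patient left home" event should produce a reminder.
// `readings` is the list of timestamps (ms) at which the patient took a reading.
function shouldRemind(exitEvent, readings) {
  if (!exitEvent.leftHome) return false;
  const readingToday = readings.some(ts => sameDay(ts, exitEvent.timestamp));
  return !readingToday;
}

// Arbitrary example dates
const exit = { patientName: 'Bruce Wayne', leftHome: true, timestamp: Date.UTC(2016, 5, 2, 8, 0) };
const yesterday = Date.UTC(2016, 5, 1, 7, 30);
const thisMorning = Date.UTC(2016, 5, 2, 7, 30);

console.log(shouldRemind(exit, [yesterday]));              // true: no reading yet today
console.log(shouldRemind(exit, [yesterday, thisMorning])); // false: reading already taken
```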

Raising Alerts from Explorations

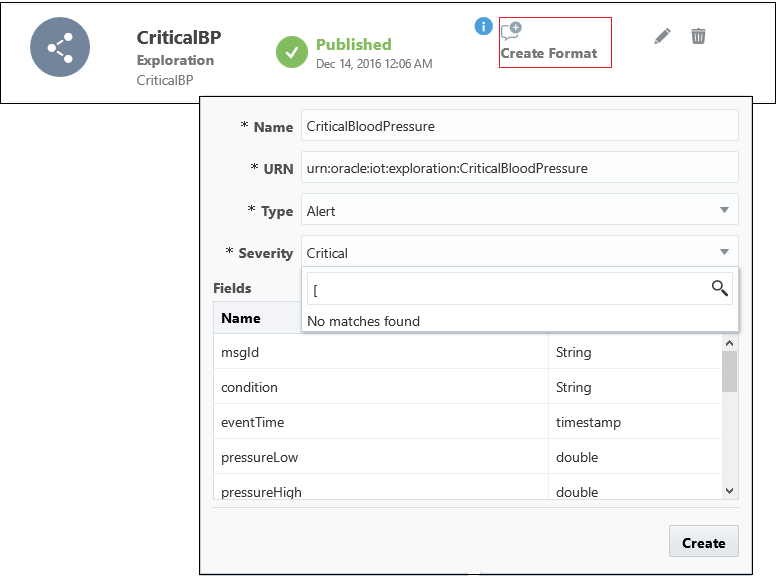

Streaming data into IoT explorations is very useful for real time data analysis for predictive intelligence and reactive actions. To react to an anomaly or an event(s) of interest, create a message format (CriticalBloodPressure) from an exploration to generate an alert of suitable severity. The event message type is based on the attributes defined or created as part of the exploration. IoT exploration attributes need not be the same as the attributes defined in the device model as they can be renamed and logical ones added.

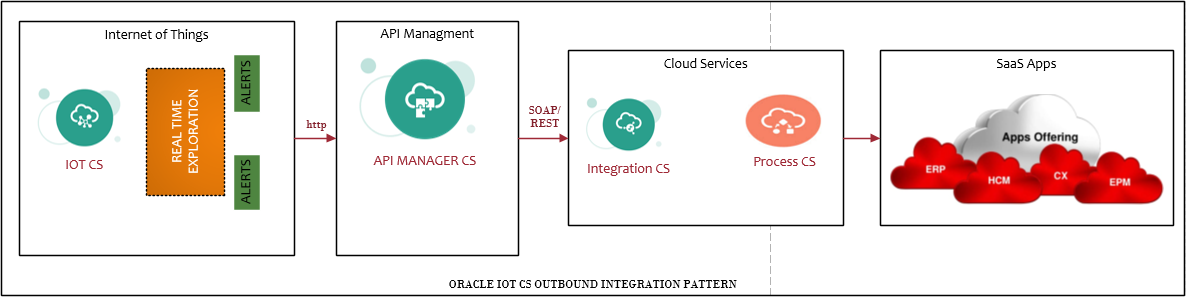
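As an illustration of how exploration attributes can differ from device model attributes, the sketch below maps an event to an alert payload with renamed fields and one derived field. Only the CriticalBloodPressure name comes from our application; the payload shape, the severity labels and their thresholds are assumptions for the example and are not clinical guidance.

```javascript
// Map an exploration event to a hypothetical CriticalBloodPressure alert payload.
function toAlert(event) {
  const systolic = event.bloodPressureHigh;
  // Severity bands are illustrative assumptions only.
  const severity = systolic >= 180 || systolic < 80 ? 'CRITICAL' : 'SIGNIFICANT';
  return {
    format: 'CriticalBloodPressure',
    severity: severity,
    // Attributes renamed relative to the device model
    systolic: systolic,
    diastolic: event.bloodPressureLow,
    patient: event.patientName,
    // A derived ("logical") attribute that does not exist in the device model
    pulsePressure: systolic - event.bloodPressureLow
  };
}

console.log(toAlert({ patientName: 'Bruce Wayne', bloodPressureHigh: 185, bloodPressureLow: 110 }));
```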

Integrating IoT Application with External Systems

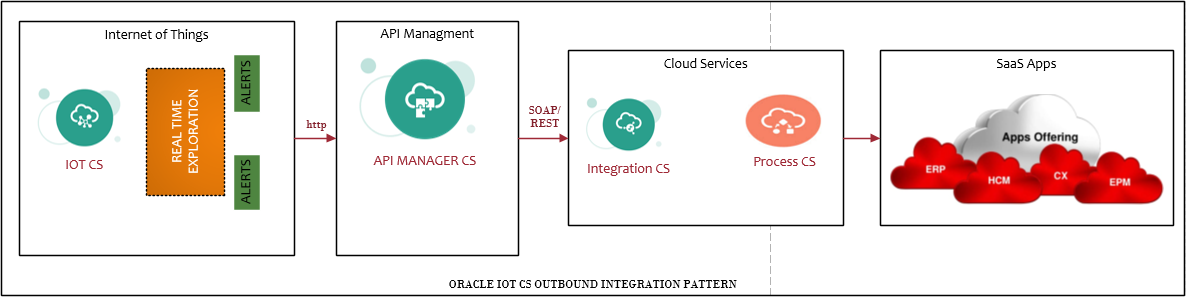

After an alert has been raised in an Oracle IoT CS application, the application can forward the message to an external application in the form of a push request. As such, a number of publish subscribe or one way messaging patterns can be built using IoT Cloud Service as the central point for generating business events. Make sure to decouple any external integration from IoT CS through a proper service abstraction layer. Oracle API Manager Cloud Service can be very useful here to provide a gateway for outbound integration that can consume business events from IoT CS and publish them to other cloud services or SaaS applications as described in the integration pattern below.

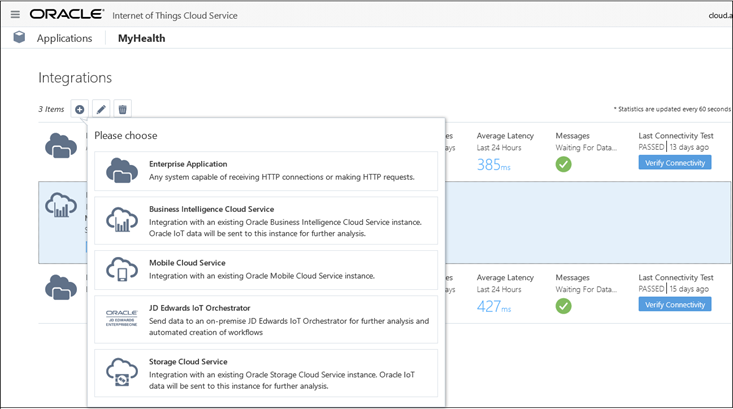

Apart from providing out of the box integration with Business Intelligence CS and Mobile CS, IoT CS can publish a message to pretty much any external application that is capable of receiving messages over HTTP. Once an HTTP connection is configured for an enterprise application, Oracle IoT CS provides the ability to run a connectivity test against the application. Once a connection is established, the Streams tab allows selecting a message format available in the IoT application (typically an alert) to be sent to the HTTP endpoint.
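On the receiving side, an HTTP endpoint only needs to parse the posted body and decide what to do with it. The sketch below shows that step as a plain function in JavaScript. The JSON payload shape (`patient`, `systolic`) and the status codes chosen are assumptions for the example; the actual message layout depends on the message format selected in the Streams tab.

```javascript
// Handle the raw body of an HTTP POST carrying an alert; returns the status code and reply body.
function handleAlertPost(rawBody) {
  let alert;
  try {
    alert = JSON.parse(rawBody);
  } catch (err) {
    return { status: 400, body: 'invalid JSON' };
  }
  if (alert === null || typeof alert !== 'object' ||
      typeof alert.patient !== 'string' || typeof alert.systolic !== 'number') {
    return { status: 422, body: 'missing patient or systolic' };
  }
  // A real receiver would start a process or enqueue the alert here.
  return { status: 202, body: 'accepted alert for ' + alert.patient };
}

console.log(handleAlertPost('{"patient":"Bruce Wayne","systolic":165}'));
console.log(handleAlertPost('not json'));
```

A function like this could be called from the request handler of a Node.js `http` server, or from whatever framework hosts the endpoint.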

Oracle Process Cloud Service (PCS)

In the information value loop for Internet of Things explained earlier, critical business events often require human intervention. Oracle Process Cloud Service can close this loop as it can ACT on information of interest by bringing people, process and information together. Once a process consumes an event of interest, it can notify a pharmacist to analyse the patient’s critical reading.

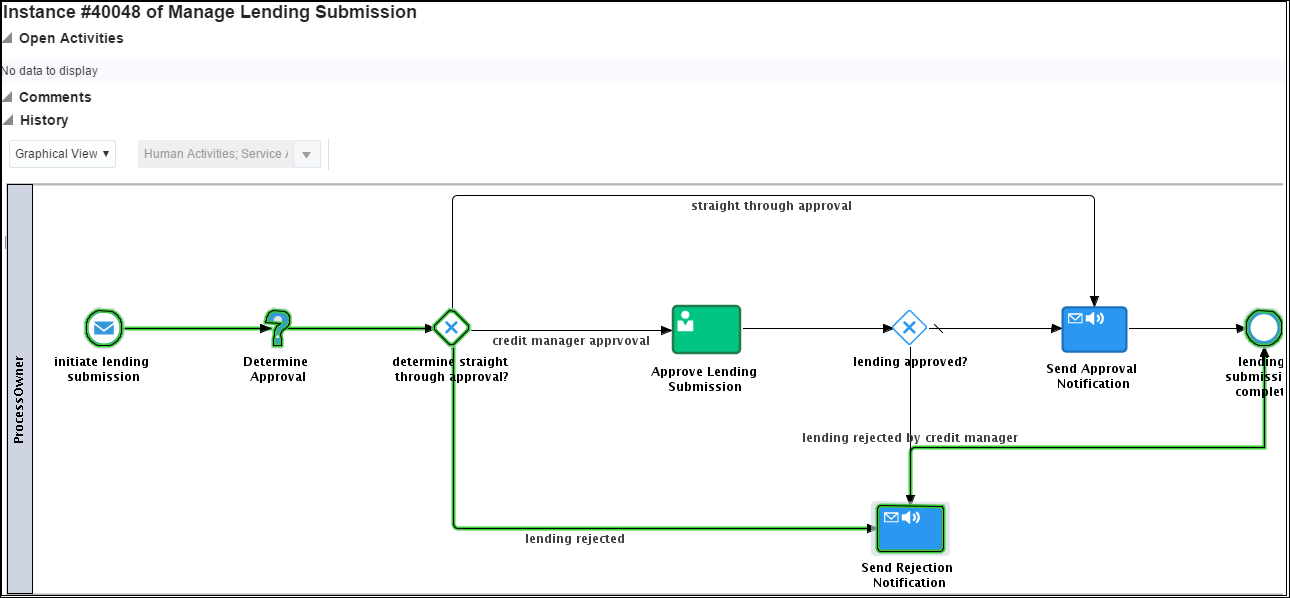

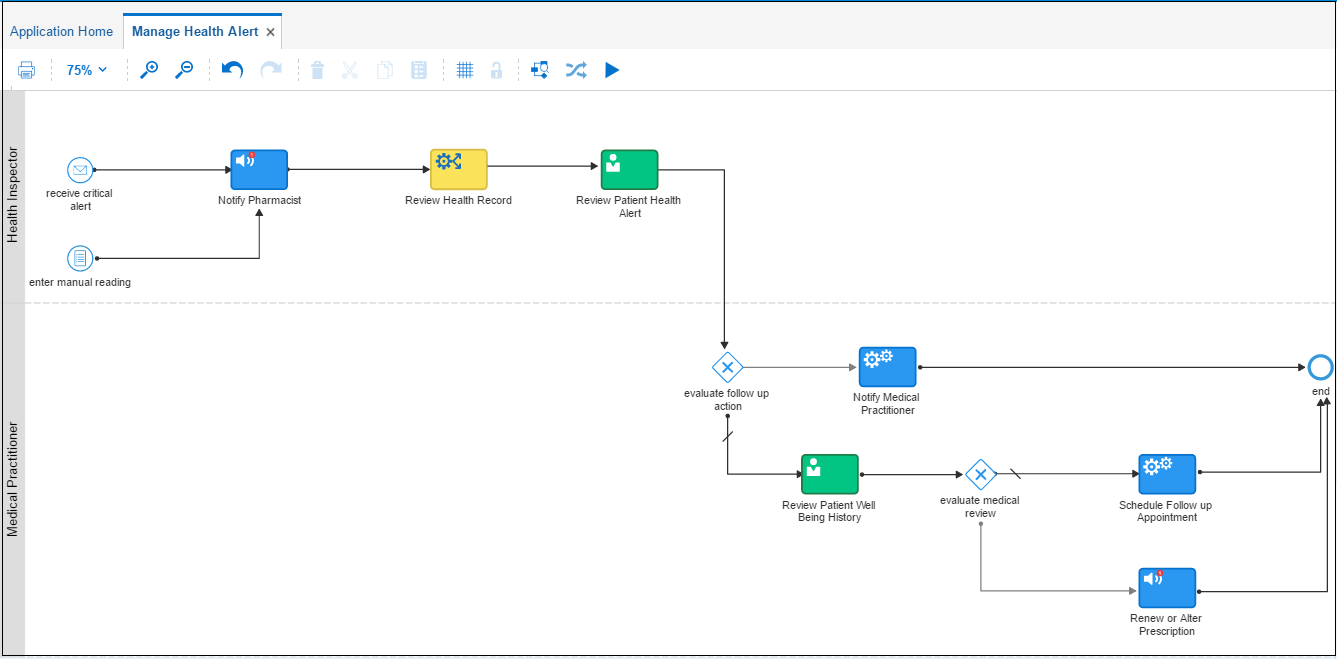

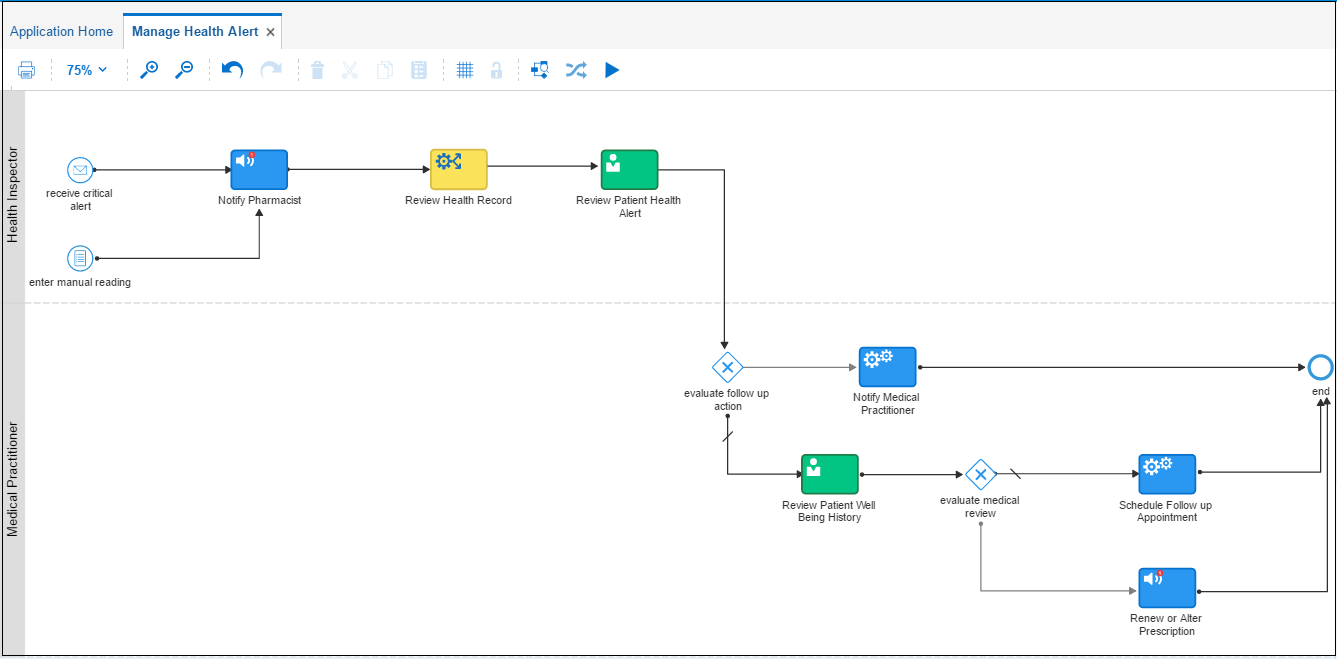

Manage Health Alert Process

One of the main challenges in device to human interfaces is that, although users have the smart devices, they minimally follow the suggested course of action and, eventually, the devices end up serving as shelfware. The Manage Health Alert Oracle Process Cloud Service process, shown below, provides an ability to integrate processes that involve devices, applications and require human intervention. The PCS process outlines a map that facilitates patient-pharmacist-medical practitioner interaction. The process allows:

- Review of critical and historical readings to detect any existing patterns and rule out health related red herrings.

- Pharmacists to reach out to the patient or their emergency contacts to inquire about their well being or possibly do a tele-health check.

- Automatic scheduling of consultations and appointments with a physician for a detailed check.

- Reviewing or renewing prescriptions for the patient and delivering it to them.

These are just a few among many potential human to human interaction use cases made easy through IoT and PCS integration.

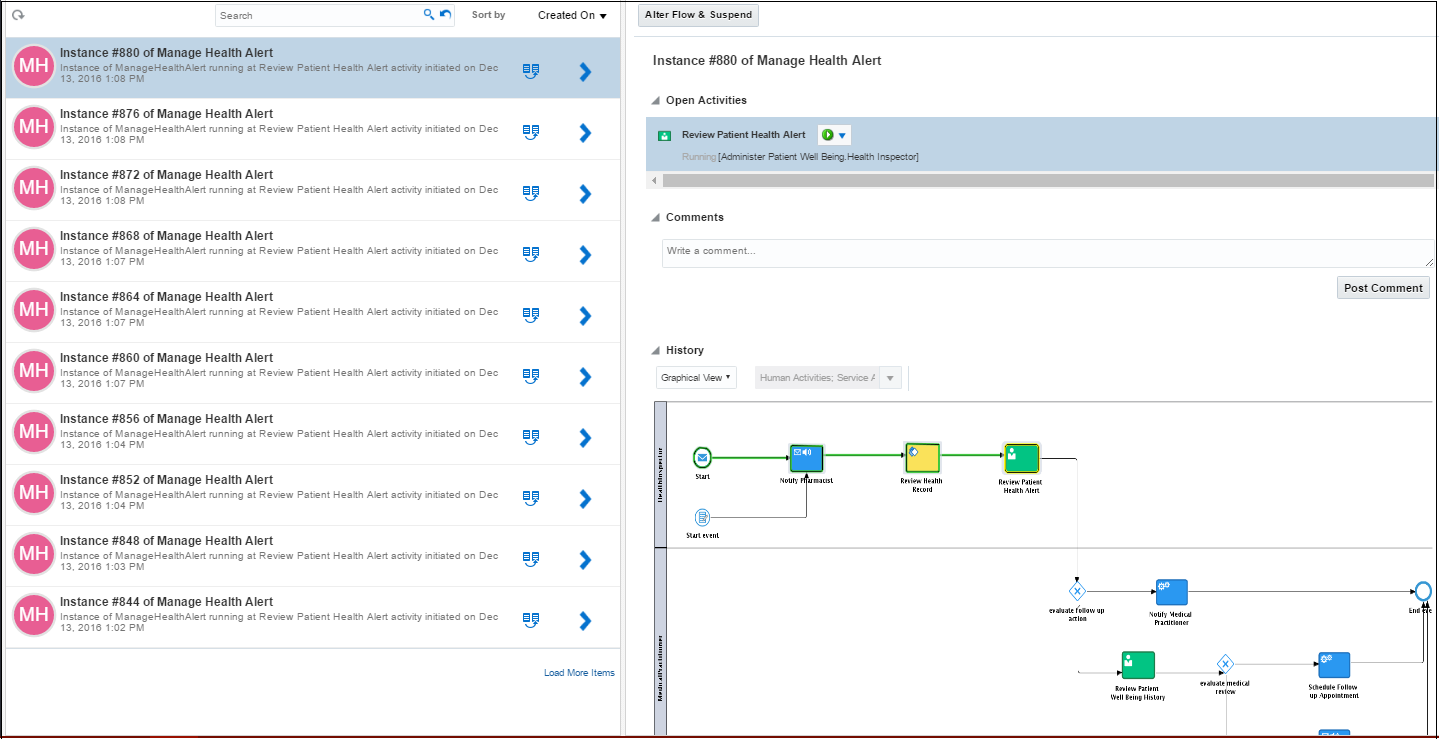

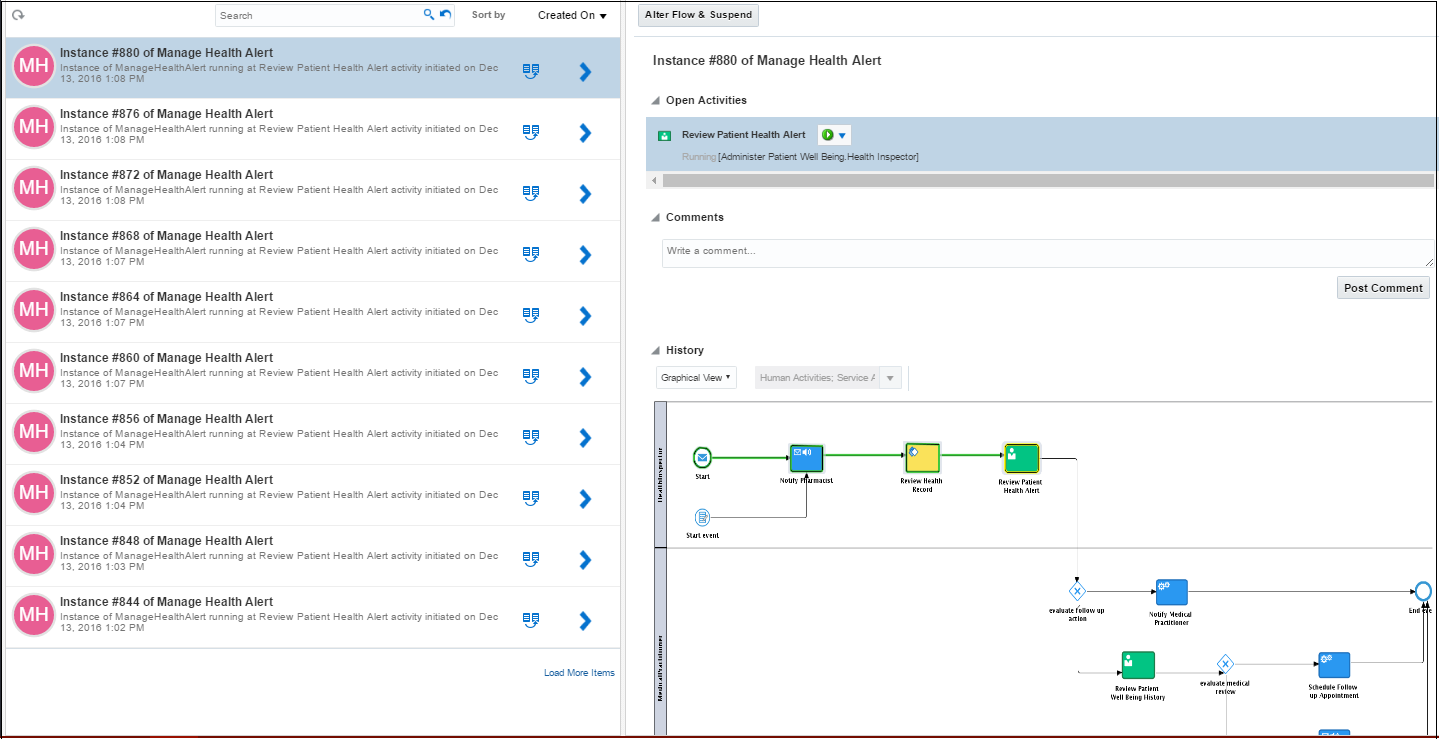

Just like its on premise sibling (Oracle BPM Suite 12c), Process Cloud Service provides an instance tracking console to view and manage live instances. The console provides a detailed graphical and activity audit trail along with an ability to act on touch points that require human intervention.

Oracle PCS provides basic HTML5 based web forms which can define the user interface allowing end users to interact with business processes. The form composer provides an editor for creating basic forms that can present information based on the data elements defined in the process. We created the following form for pharmacists to review a critical reading for a patient in order to determine the next course of action. The data captured from an end user in the form acts as a decision point that can trigger alternative process paths.

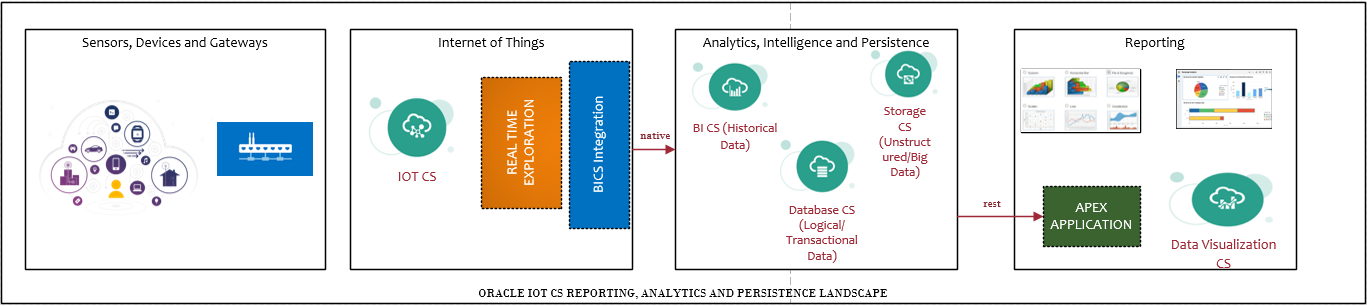

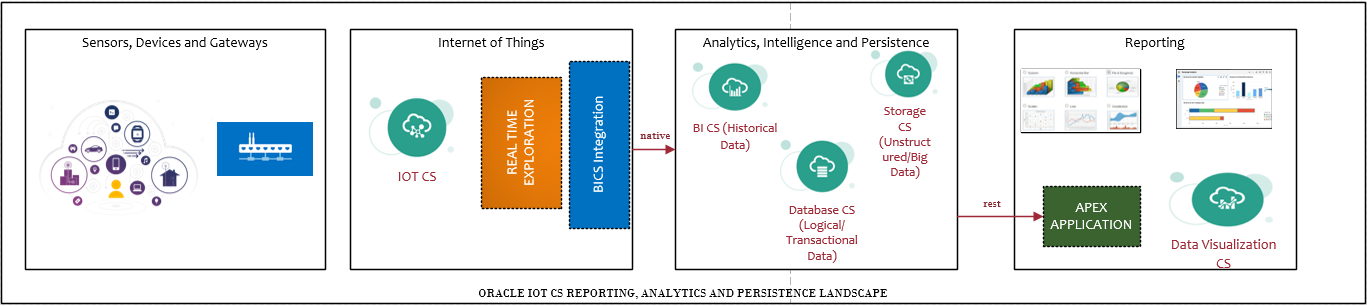

Reporting and Dashboards – Utilizing Oracle Database CS, Business Intelligence CS and Data Visualization CS

Exploratory Data Analysis is the process of sifting through the data to get a sense of what’s going on. Extracting insight from data requires analysis, the fourth stage in the Information Value Loop. A derived insight is a consumable piece of information that can be used to make decisions based on current events or make future predictions. A simplified reference architecture pattern to derive analytical reporting from IoT Cloud Service is presented below:

- Structured data can be stored in transactional or historical data marts.

- Unstructured data can be stored in distributed file based storage platforms.

- From a reporting layer perspective, there are multiple solution components that can be used such as Apex reports, Oracle BI and Data Visualization dashboards, business activity monitoring dashboards, etc.

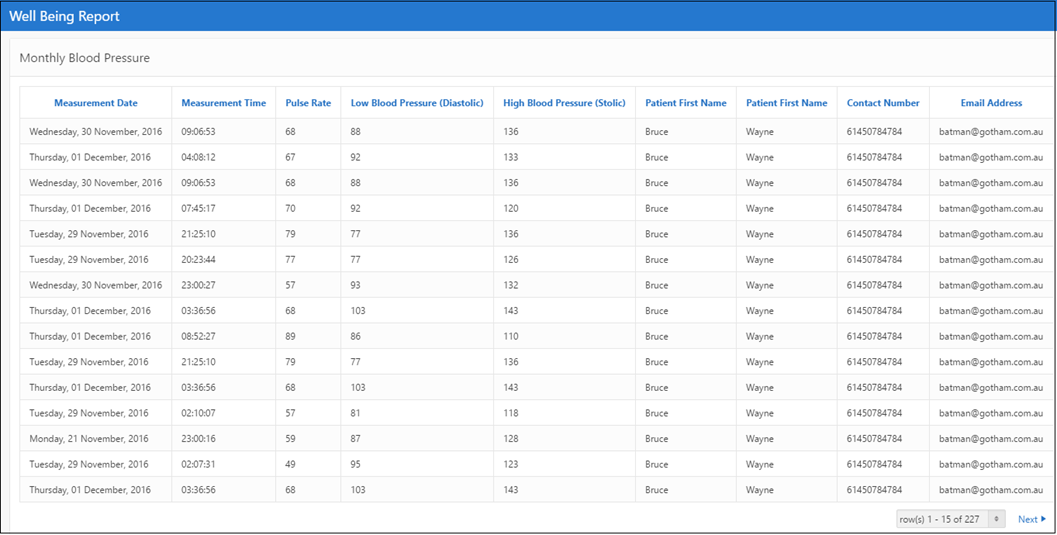

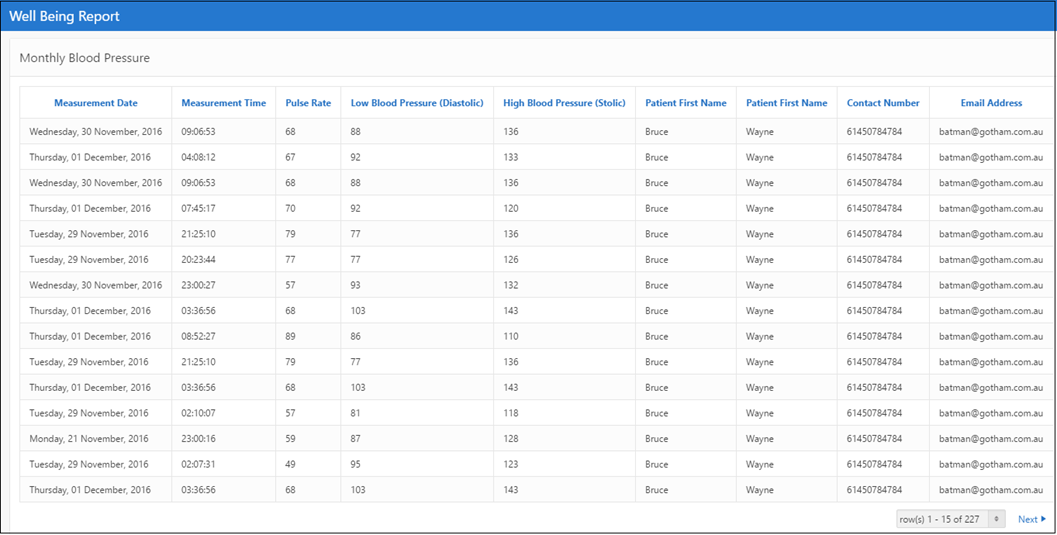

Of the many different reports that can be built to complement the above solution, we built a few to help medical practitioners dig into a patient’s health readings over a period of time and assess any abnormality over a time range. The following report created in Oracle Database Cloud Service (APEX) tabulates the readings of a patient over a month. It can be opened as a link from a PCS form, with the arguments passed in that link.

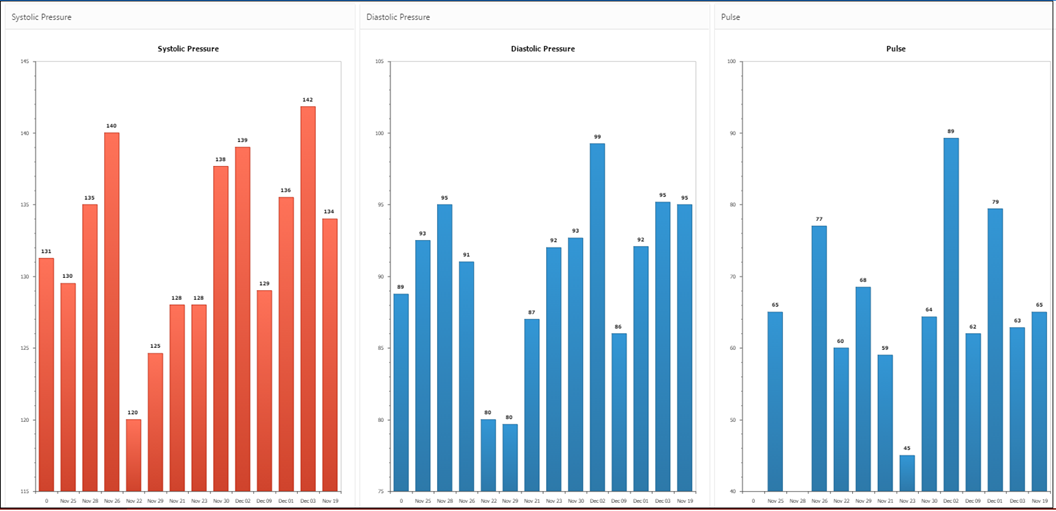

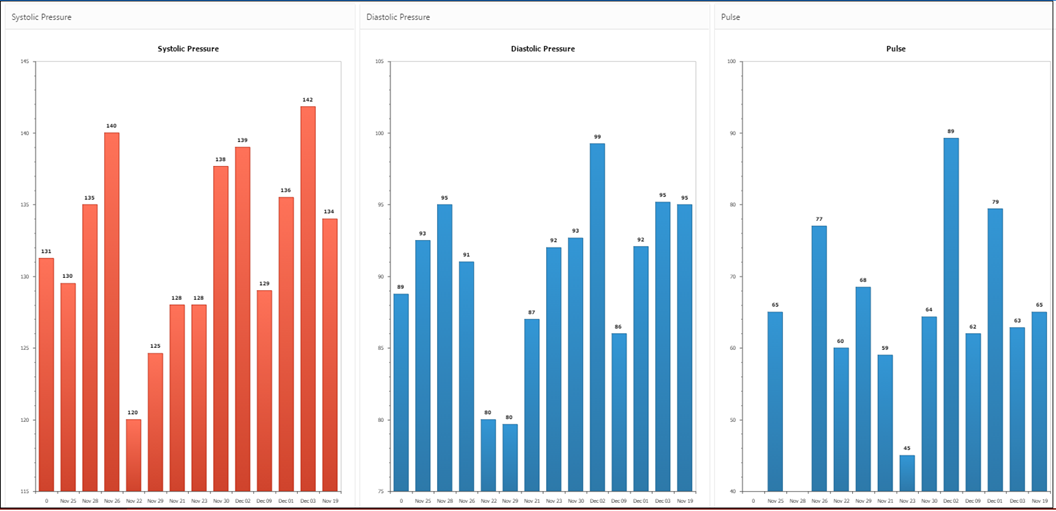

There can be various renditions of the above information, the most common of which is a bar chart of the average systolic pressure, diastolic pressure and pulse rate for each day of the month. The charts can visually highlight upward or downward trends in blood pressure over a period of time, which can be useful to detect spikes/troughs relative to a baseline.

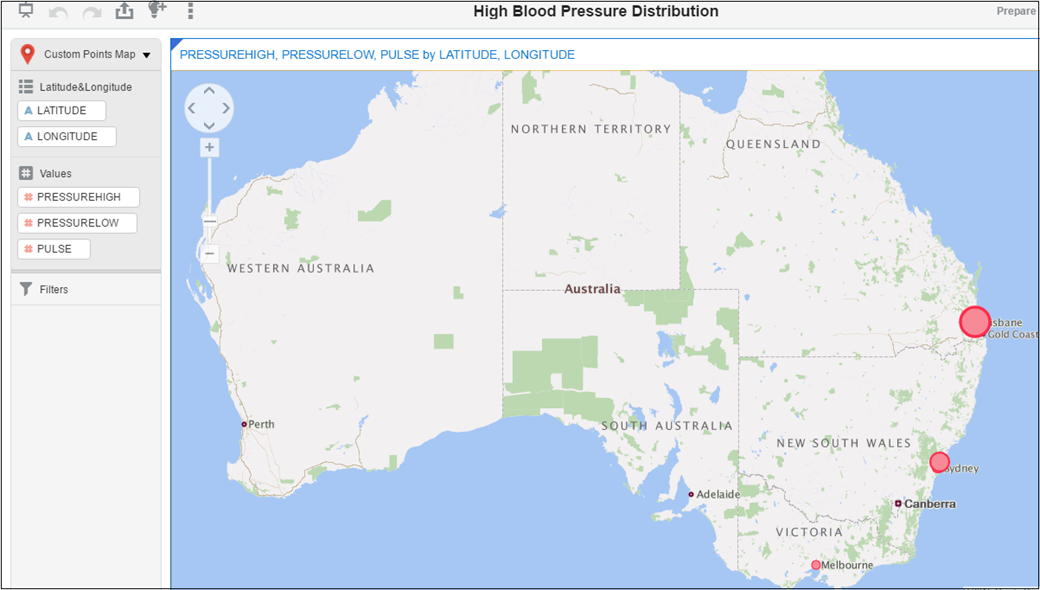

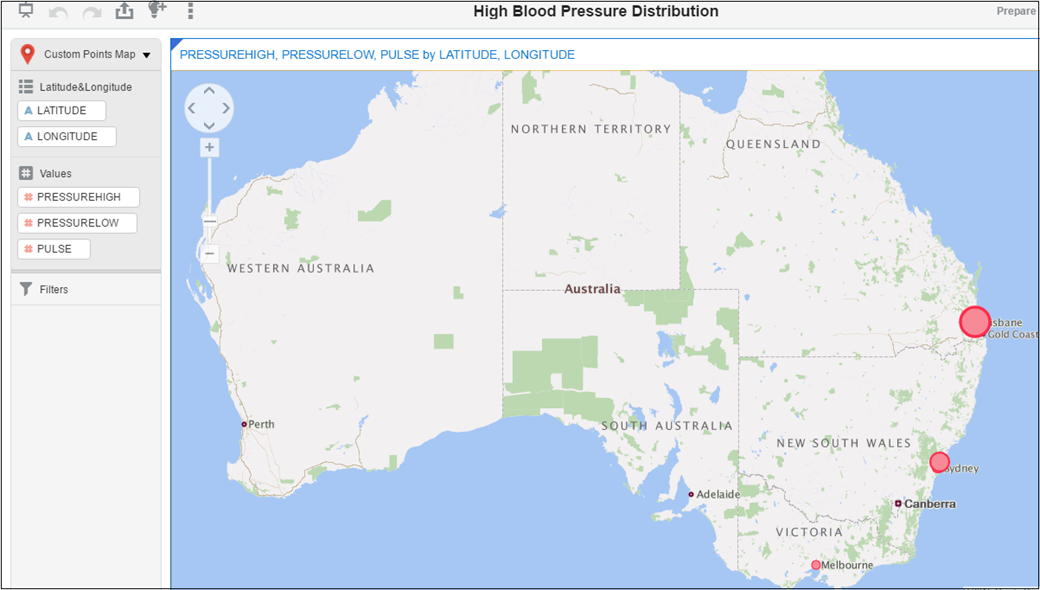
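The daily averages behind such a chart are a straightforward group-and-average step. Our reports did this inside APEX and BICS; the sketch below only illustrates the calculation in plain JavaScript, with an assumed `timestamp` field on each reading, a hypothetical `dailyAverages` helper and made-up sample values.

```javascript
// Group readings by calendar day (UTC) and average systolic, diastolic and pulse.
function dailyAverages(readings) {
  const byDay = new Map();
  for (const r of readings) {
    const day = new Date(r.timestamp).toISOString().slice(0, 10);
    const acc = byDay.get(day) || { count: 0, systolic: 0, diastolic: 0, pulse: 0 };
    acc.count += 1;
    acc.systolic += r.bloodPressureHigh;
    acc.diastolic += r.bloodPressureLow;
    acc.pulse += r.pulse;
    byDay.set(day, acc);
  }
  return Array.from(byDay, ([day, acc]) => ({
    day: day,
    systolic: acc.systolic / acc.count,
    diastolic: acc.diastolic / acc.count,
    pulse: acc.pulse / acc.count
  }));
}

const monthReadings = [
  { timestamp: Date.UTC(2016, 5, 1, 7, 0), bloodPressureHigh: 140, bloodPressureLow: 90, pulse: 60 },
  { timestamp: Date.UTC(2016, 5, 1, 19, 0), bloodPressureHigh: 150, bloodPressureLow: 100, pulse: 70 },
  { timestamp: Date.UTC(2016, 5, 2, 7, 0), bloodPressureHigh: 130, bloodPressureLow: 85, pulse: 62 }
];
console.log(dailyAverages(monthReadings));
```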

Anonymizing Patient Data

The last solution that we built to support our use case sanitized the patient health readings by removing personal and confidential data and presented the information in a statistical way that could benefit national/state government health record keepers. How did we do this? Oracle Data Visualization Cloud Service (DVCS) came to our rescue. With the blood pressure readings streamed from IoTCS to Oracle BICS, we built a Custom Points Map to plot the coordinates from where the readings were taken onto a geographical map. This resulted in a heat map showing regions/places with a higher concentration of critical blood pressure readings. This sort of anonymized data is immensely useful for government health agencies to plan for aid work and improve the ability to respond faster to critical conditions.
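The sanitizing step itself amounts to dropping the personal attributes before the data reaches the reporting layer. A minimal sketch in plain JavaScript, using the attribute names from the sample message earlier in this post. The list of fields treated as personal and the rounding of coordinates to two decimal places are assumptions added for the example, not a description of what our prototype did.

```javascript
// Fields treated as personal data in this sketch (names taken from the sample message).
const PERSONAL_FIELDS = ['patientName', 'patientAge', 'patientEmail', 'patientContactNumber', 'patientGender'];

// Drop personal fields and coarsen the coordinates to two decimal places (roughly 1 km).
function anonymize(reading) {
  const result = {};
  for (const [key, value] of Object.entries(reading)) {
    if (!PERSONAL_FIELDS.includes(key)) result[key] = value;
  }
  result.ora_latitude = Math.round(reading.ora_latitude * 100) / 100;
  result.ora_longitude = Math.round(reading.ora_longitude * 100) / 100;
  return result;
}

const anonymous = anonymize({
  bloodPressureHigh: 165, bloodPressureLow: 100, pulse: 60,
  patientName: 'Bruce Wayne', patientEmail: 'bruce@example.com',
  ora_latitude: -37.817, ora_longitude: 144.946
});
console.log(anonymous);
```

Coarsening the location is one simple way to make it harder to trace a reading back to a single household while still keeping it useful for a regional heat map.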

To Sum it All Up

Transferring healthcare monitoring from hospitals to the home is disrupting traditional models of healthcare delivery. The positive implications of an IoT ecosystem in healthcare are numerous, from remote patient monitoring to predictive analytics, and can result in improved population health management. While this may seem like a futuristic daydream to those on the sidelines, leaders in the healthcare industry are already integrating IoT devices to improve healthcare. The speed to value at which use cases like the one covered in this detailed blog can be realized, with little to no infrastructure setup cost, using a combination of Oracle PaaS services is unprecedented.

There are a number of other potential use cases such as traffic incident monitoring, pest management, smart homes, smart parking management and other industrial applications that can be realized using a similar architectural pattern. The speed to value at which these solutions can be prototyped and productized using the Oracle PaaS Cloud services is remarkable, and the Hackathon was a great example of how complicated end to end solutions can be developed with ease.

If you would like to know more about the use case or technical details of the cloud services that we used, please feel free to leave your feedback or comments.